1.2 Installing ZENworks Orchestrator to a High Availability Environment

This section includes information to help you install ZENworks Orchestrator Server components in a High Availability environment. The sequence below is the supported method for configuring this environment.

- Section 1.2.2, Install the High Availability Pattern for SLES 10
- Section 1.2.3, Configure Nodes with Time Synchronization and Install Heartbeat to Each Node
- Section 1.2.5, Install and Configure ZENworks Orchestrator on the First Clustered Node
- Section 1.2.6, Run the High Availability Configuration Script
- Section 1.2.9, Test the Failover of the ZENworks Orchestrator Server in a Cluster

NOTE: Upgrading from earlier versions of ZENworks Orchestrator (including an earlier installation of version 1.3) to a high availability environment is not supported.

If you plan to use ZENworks Virtual Machine Management in a high availability environment, see Section 1.2.10, Install and Configure other ZENworks Orchestrator Components to the High Availability Grid and Section 1.2.11, Creating an NFS Share for VM Management to Use in a High Availability Environment.

1.2.1 Meet the Prerequisites

The environment where ZENworks Orchestrator Server is installed must meet the hardware and software requirements for high availability. This section includes the following information to help you understand those requirements.

Hardware Requirements for Creating a High Availability Environment

The following hardware components are required for creating a high availability environment for ZENworks Orchestrator:

- A minimum of two SLES 10 SP1 (or later) physical servers, each with dual network interface cards (NICs). These servers are the nodes of the cluster where the ZENworks Orchestrator Server is installed and are a key part of the high availability infrastructure.
- A Fibre Channel or iSCSI Storage Area Network (SAN).
- A STONITH device to provide node fencing. A STONITH device is a power switch that the cluster uses to reset nodes that are considered dead. Resetting non-heartbeating nodes is the only reliable way to ensure that nodes that hang, and only appear to be dead, do not corrupt data. For more information about setting up STONITH, see the Configuring Stonith section of the SLES 10 Heartbeat Guide.

Software Requirements for Creating a High Availability Environment

The following software components are required for creating a high availability environment for ZENworks Orchestrator:

- The High Availability pattern on the SLES 10.x RPM install source, which includes:
  - The Heartbeat v2 software package, a high-availability resource manager that supports multinode failover. Install all available online updates for it on every node that will be part of the Heartbeat cluster.
  - The Oracle Cluster File System 2 (OCFS2), a parallel cluster file system that offers concurrent access to a shared file system. See Section 1.2.4, Set Up OCFS2 for more information.

  SLES 10.x integrates these open source storage technologies (Heartbeat 2 and OCFS2) in a High Availability installation pattern, which, when installed and configured, is known as the Novell High Availability Storage Infrastructure. This combined technology automatically shares cluster configuration and coordinates cluster-wide activities to ensure deterministic and predictable administration of storage resources for shared-disk-based clusters.
- DNS installed on the nodes of the cluster for resolving the cluster hostname to the cluster IP address.
- ZENworks Orchestrator Server installed on all nodes of the cluster (a two-node or three-node configuration is recommended).
- (Optional) VM Builder/VM Warehouse Server installed on a non-clustered server (for more information, see Section 1.2.10, Install and Configure other ZENworks Orchestrator Components to the High Availability Grid).
- (Optional) Orchestrator Monitoring Server installed on a non-clustered server (for more information, see Section 1.2.10, Install and Configure other ZENworks Orchestrator Components to the High Availability Grid).

1.2.2 Install the High Availability Pattern for SLES 10

The High Availability install pattern is included in the distribution of SLES 10 and later. Use YaST2 (or the command line, if you prefer) to install the packages that are associated with the high availability pattern to each physical node that is to participate in the ZENworks Orchestrator cluster.

NOTE: The High Availability pattern is included on the SLES 10 install source, not the ZENworks Orchestrator install source.

The packages associated with high availability include:

- drbd (Distributed Replicated Block Device)
- EVMS high availability utilities
- The Heartbeat subsystem for high availability on SLES
- Heartbeat CIM provider
- A monitoring daemon for maintaining high availability resources that can be used by Heartbeat
- A plug-in and interface loading library used by Heartbeat
- An interface for the STONITH device
- OCFS2 GUI tools
- OCFS2 Core tools

For more information, see Installing and Removing Software in the SLES 10 Installation and Administration Guide.

1.2.3 Configure Nodes with Time Synchronization and Install Heartbeat to Each Node

After you have installed the High Availability packages on each node of the cluster, you need to configure the Network Time Protocol (NTP) and the Heartbeat 2 clustering environment on each physical machine that participates in the cluster.

Configuring Time Synchronization

To configure time synchronization, you need to configure the nodes in the cluster to synchronize to a time server outside the cluster. The cluster nodes use the time server as their time synchronization source.

NTP is included as a network service in SLES 10 SP1. Use the time synchronization instructions in the SLES 10 Heartbeat Guide to help you configure each cluster node with NTP.
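If you configure NTP from the command line instead of through YaST, the essential change on each node is a `server` line in the NTP configuration file that points at the time server outside the cluster. The following is a minimal sketch that assumes the standard ntp.conf `server <host>` directive; the function name and the example host name are hypothetical.

```sh
#!/bin/sh
# Sketch: add an external time server to an ntp.conf-style file, once.
# Assumption: the file uses the standard "server <host>" directive.
ensure_ntp_server() (
    conf=$1   # NTP configuration file, normally /etc/ntp.conf
    host=$2   # time server outside the cluster
    # Append only when no "server <host>" line exists yet, so the function
    # can be run repeatedly on every node without duplicating the entry.
    if ! grep -Eq "^[[:space:]]*server[[:space:]]+$host([[:space:]]|\$)" "$conf" 2>/dev/null; then
        printf 'server %s\n' "$host" >> "$conf"
    fi
)
```

For example, `ensure_ntp_server /etc/ntp.conf ntp.example.com` on each node, followed by a restart of the NTP daemon (on SLES, the init script is /etc/init.d/ntp).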

Configuring Heartbeat 2

Heartbeat 2 is an open source server clustering system that ensures high availability and manageability of critical network resources including data, applications, and services. It is a multinode clustering product for Linux that supports failover, failback, and migration (load balancing) of individually managed cluster resources.

Heartbeat packages are installed with the High Availability pattern on the SLES 10 SP1 install source. For detailed information about configuring Heartbeat 2, see the installation and setup instructions in the SLES 10 Heartbeat Guide.

An important value you need to specify in order for Heartbeat to be enabled for High Availability is configured in the field on the settings page of the Heartbeat console (hb_gui).

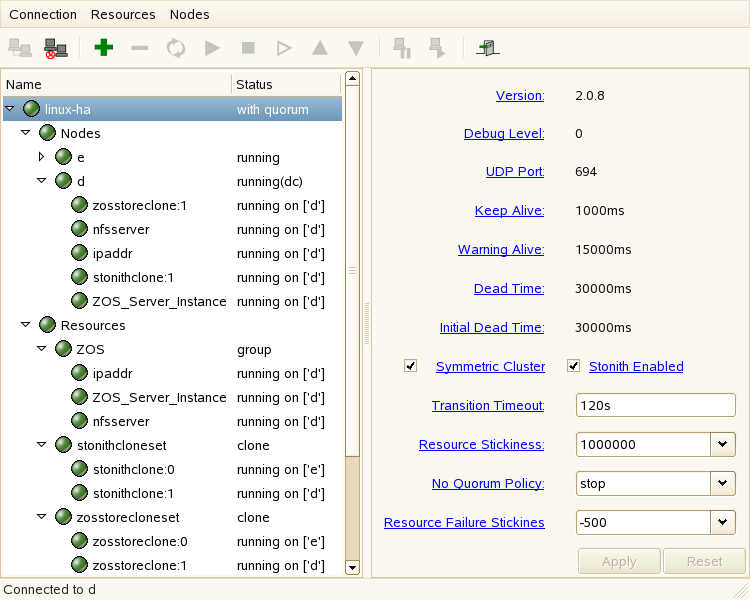

Figure 1-2 The Main Settings Page in the Heartbeat2 Graphical Interface

The value in this field controls how long Heartbeat waits for services to start. The default value is 20 seconds, but the ZENworks Orchestrator Server needs more time than this to start. We recommend that you set this field to 120s. More time might be required if your Orchestrator grid is very large.
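To confirm the timeout that a running cluster actually uses, you can save the CIB (for example, with `cibadmin -Q > /tmp/cib.xml`) and read the value back. This is a sketch under two assumptions not stated in this guide: that the hb_gui field is stored as the crm_config option `default_action_timeout` (spelled `default-action-timeout` in some Heartbeat releases), and that its value is plain seconds or carries an `s` or `min` suffix. The function name is hypothetical.

```sh
#!/bin/sh
# Sketch: read the cluster-wide default action timeout from a saved CIB dump
# and print it in seconds.
# Assumptions: the hb_gui field maps to the crm_config option
# default_action_timeout (or default-action-timeout), and its value is plain
# seconds or carries an "s" or "min" suffix.
action_timeout_seconds() (
    cib=$1
    # Grab the first matching <nvpair .../> element, whatever its attribute
    # order, then pull out the value attribute.
    value=$(grep -Eo '<nvpair[^>]*name="default[-_]action[-_]timeout"[^>]*>' "$cib" |
            head -n 1 | sed -E 's/.*value="([^"]*)".*/\1/')
    case $value in
        "")   echo 20 ;;                        # not set: the 20-second default
        *min) echo $(( ${value%min} * 60 )) ;;
        *s)   echo "${value%s}" ;;
        *)    echo "$value" ;;
    esac
)
```

A check such as `[ "$(action_timeout_seconds /tmp/cib.xml)" -ge 120 ] || echo "timeout below 120s"` then flags nodes that still use a short timeout.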

1.2.4 Set Up OCFS2

OCFS2 is a general-purpose journaling file system that is fully integrated in the Linux 2.6 (and later) kernel and ships with SLES 10.x. OCFS2 lets you store application binary files, data files, and databases on devices in a SAN. All nodes in a cluster have concurrent read and write access to the file system. A distributed lock manager helps prevent file access conflicts. OCFS2 supports up to 32,000 subdirectories and millions of files in each directory. The O2CB cluster service (a driver) runs on each node to manage the cluster.

To set up the high availability environment for ZENworks Orchestrator, you first need to install the High Availability pattern in YaST (this includes the ocfs2-tools and ocfs2console software packages) and configure the Heartbeat 2 cluster management system on each physical machine that participates in the cluster. Then set up an OCFS2 file system on the SAN where the Orchestrator files can be stored. For information on setting up and configuring OCFS2, see the Oracle Cluster File System 2 section of the SLES 10 Administration Guide.

Shared Storage Requirements for Creating a High Availability Environment

If you want data to be highly available, we recommend that you set up a Fibre Channel Storage Area Network (SAN) to be used by your ZENworks Orchestrator cluster.

SAN configuration is beyond the scope of this document. For information about setting up a SAN, see the Oracle Cluster File System 2 documentation in the SLES 10 Administration Guide.

IMPORTANT: ZENworks Orchestrator requires a specific mount point for file storage on the SAN. Use /zos (in the root directory) for this mount point.
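Before you continue, you might want to confirm on each node that /zos is actually mounted with OCFS2. The following minimal sketch assumes the Linux /proc/mounts format (device, mount point, file system type, options, and so on); the mount table is passed as a parameter so that the check can also be tried against sample data. The function name is hypothetical.

```sh
#!/bin/sh
# Sketch: succeed only if the given mount point is mounted with OCFS2.
is_ocfs2_mount() (
    table=${1:-/proc/mounts}   # mount table to inspect
    mountpoint=${2:-/zos}      # shared Orchestrator mount point
    # Fields in /proc/mounts: device, mount point, fs type, options, dump, pass
    awk -v mp="$mountpoint" '$2 == mp && $3 == "ocfs2" { found = 1 }
                             END { exit !found }' "$table"
)
```

Running `is_ocfs2_mount /proc/mounts /zos && echo "/zos is on OCFS2"` on every node gives a quick confirmation before the Orchestrator files are moved to shared storage.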

1.2.5 Install and Configure ZENworks Orchestrator on the First Clustered Node

This section describes the two tasks for preparing to use ZENworks Orchestrator in an HA environment:

- Installing the Orchestrator Server YaST Patterns on the Node
- Running the Orchestrator Installation/Configuration Script

Installing the Orchestrator Server YaST Patterns on the Node

NOTE: As you prepare to install ZENworks Orchestrator 1.3 and use it in a high availability environment, make sure that the requirements to do so are met. For more information, see Planning the Installation in the Novell ZENworks Orchestrator 1.3 Installation and Getting Started Guide.

The ZENworks Orchestrator Server (Orchestrator Server) is supported on SUSE® Linux Enterprise Server 10 Service Pack 1 (SLES 10 SP1) only.

To install the ZENworks Orchestrator Server packages on the first node of the cluster:

-

Download the appropriate ZENworks Orchestrator Server ISO (32-bit or 64-bit) to an accessible network location.

-

(Optional) Create a DVD ISO (32-bit or 64-bit) that you can take with you to the machine where you want to install it or use a network install source.

-

Install ZENworks Orchestrator software:

-

Log in to the target SLES 10 SP1 server as root, then open YaST2.

-

In the YaST Control Center, click > , then click to display the Add-on Product Media dialog box.

-

In the Add-on Product Media dialog box, select the ISO media ( or ) to install.

-

(Conditional) Select , click , insert the DVD, then click .

-

(Conditional) Select , click, select the check box, browse to ISO on the file system, then click .

-

-

Read and accept the license agreement, then click to display YaST2.

-

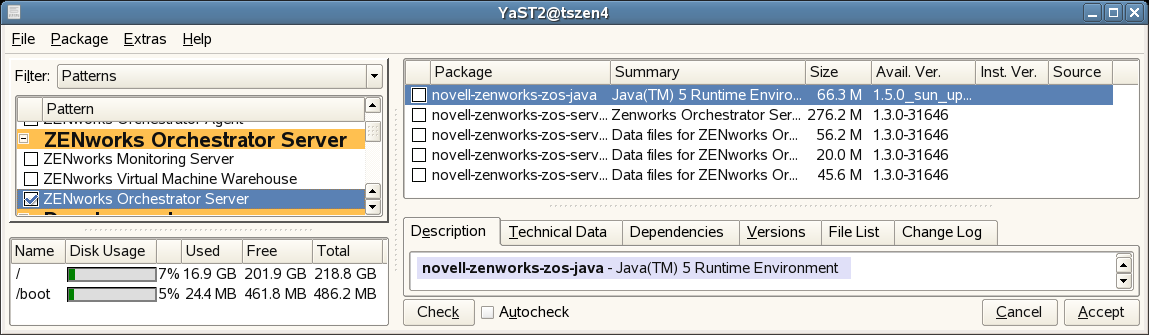

In the YaST2 drop-down menu select to display the install patterns available on the ZENworks Orchestrator ISO.

-

Select only the ZENworks Orchestrator Server installation pattern for installation on the first node. This pattern is the gateway between enterprise applications and resource servers. The Orchestrator Server manages computing nodes (resources) and the jobs that are submitted from applications to run on these resources.

HINT:If not already selected by default, you need to select the packages that are in the ZENworks Orchestrator Server pattern.

-

Click to install the packages.

-

Running the Orchestrator Installation/Configuration Script

Use the following procedure to finish the initial installation and configuration script for the first node in the cluster.

- Configure the ZENworks Orchestrator Server components that you have installed. You can use one of two information-gathering methods to perform the configuration:

  HINT: Although the text-based configuration process detects which RPM patterns are installed, the GUI Configuration Wizard requires that you specify which components are to be configured.
  - (Conditional) Run the Orchestrator product configuration script.
    - Make sure you are logged in as root to run the configuration script.
    - Run the script, as follows:

      ```
      /opt/novell/zenworks/orch/bin/config
      ```

      When the script runs, the following information is initially displayed:

      ```
      Welcome to Novell ZENworks Orchestrator.
      This program will configure Novell ZENworks Orchestrator 1.3

      Select whether this is a new install or an upgrade

      i) install
      u) upgrade
      - - - - - -
      Selection [install]:
      ```
    - Press Enter (or enter i) to accept a new installation and to display the next part of the script.

      ```
      Select products to configure

       #  selected  Item
       1)  no       ZENworks Monitoring Service (not installed)
       2)  yes      ZENworks Orchestrator Server
       3)  no       ZENworks Orchestrator Agent (not installed)
       4)  no       ZENworks Orchestrator VM Builder (not installed)
       5)  no       ZENworks Orchestrator VM Warehouse (not installed)

      Select from the following:
        1 - 5)  toggle selection status
        a)      all
        n)      none
        f)      finished making selections
        q)      quit -- exit the program
      Selection [finish]:
      ```
    - Because you installed only the ZENworks Orchestrator Server, no other products need to be selected.

      IMPORTANT: If you happened to install the VM Builder or the VM Warehouse on this server, do not configure them on this server.

      Press Enter (or enter f) to finish the default selection and to display the next part of the script.

      ```
      Gathering information for ZENworks Orchestrator Server configuration. . .

      Select whether this is this a standard or high-availability server configuration

      s) standard
      h) ha
      - - - - - -
      Selection [standard]:
      ```
    - Enter h to specify that this is a High Availability server configuration and to display the next part of the script.
    - Enter the fully qualified cluster hostname or the IP address that is used for configuring the Orchestrator Server instance in a High Availability cluster.

      The configuration script binds the IP address of the cluster to this server.
    - Enter a name for the Orchestrator grid.

      This grid is an administrative domain container that contains all of the objects in your network or data center that the Orchestrator monitors and manages, including users, resources, and jobs. The grid name is displayed at the root of the tree in the Explorer Panel of the Orchestrator Console.
    - Enter a name for the ZENworks Orchestrator Administrator user.

      This name is used to log in as the administrator of the ZENworks Orchestrator Server and the objects it manages.
    - Enter a password for the ZENworks Orchestrator Administrator user, then retype the password to validate it.
    - Choose whether to enable an audit database by entering either y or n.

      ZENworks Orchestrator can send audit information to a relational database (RDBMS). If you enable auditing, you need access to an RDBMS. If you use a PostgreSQL database, you can configure it for use with Orchestrator auditing at this time. If you use a different RDBMS, you must configure it separately for use with Orchestrator.
    - Specify the full path to the file containing the license key you received from Novell.

      Example: /opt/novell/zenworks/zos/server/license/key.txt
    - Specify the port you want the Orchestrator Server to use for the User Portal interface, which gives users access to Orchestrator to manage jobs.

      NOTE: If you plan to use ZENworks Monitoring outside your cluster, we recommend that you do not use the default port, 80.
    - Specify a port that you want to designate for the Administrator Information page. This page includes links to product documentation, agent and client installers, and product tools to help you understand and use the product. The default port is 8001.
    - Specify a port to be used for communication between the Orchestrator Server and the Orchestrator Agent. The default port is 8100.
    - Specify (yes or no) whether you want the Orchestrator Server to generate a PEM-encoded TLS certificate for secure communication between the server and the agent. If you choose not to generate a certificate, you need to provide the location of an existing certificate and key.
    - Specify the password to be used for VNC on Xen hosts, then verify that password.
    - Specify whether to view (yes or no) or change (yes or no) the information you have supplied in the configuration script.

      If you are satisfied with the summary of information (that is, you choose not to change it) and specify that choice, the configuration launches.

      If you decide to change the information, the following choices are presented in the script:

      ```
      Select the component that you want to change

      1) ZENworks Orchestrator Server
      - - - - - - - - - - - - - - - -
      d) Display Summary
      f) Finish and Install
      ```
      - Specify 1 if you want to reconfigure the server.
      - Specify d if you want to review the configuration summary again.
      - Specify f if you are satisfied with the configuration and want to install using the specifications as they are.

-

(Conditional) Run the GUI Configuration Wizard.

NOTE:If you have already run the text-based configuration explained in Step 1.a, do not use the GUI Configuration Wizard.

-

Run the script for the ZENworks Orchestrator Configuration Wizard as follows:

/opt/novell/zenworks/orch/bin/guiconfig

The GUI Configuration Wizard launches.

IMPORTANT:If you only have a keyboard to navigate through the pages of the GUI Configuration Wizard, use the Tab key to shift the focus to a control you want to use (for example, a button), then press the space bar to activate that control.

-

Click to display the license agreement.

-

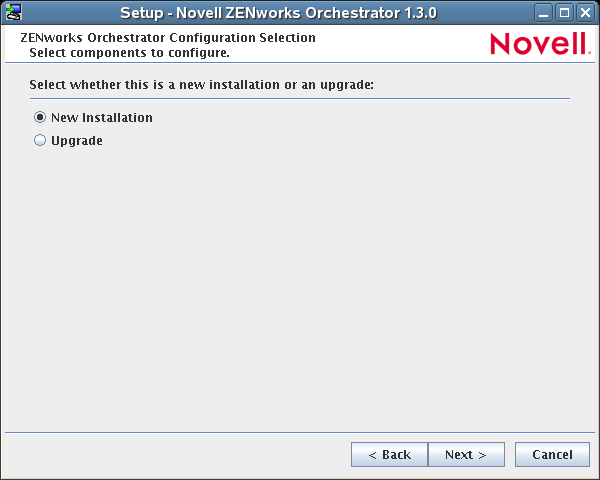

Accept the agreement, then click Next to display the installation type page.

-

Select , then click to display the ZENworks Orchestrator components page.

-

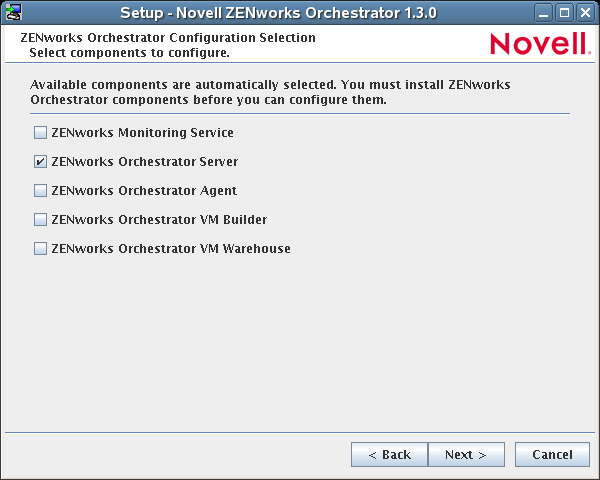

Select , then click to display the ZENworks Orchestrator components page.

The components page lists the ZENworks Orchestrator components that are available for configuration (already installed). By default, only the installed components (the ZENworks Orchestrator Server, in this case) are selected for configuration.

NOTE:If other Orchestrator patterns were installed by mistake, make sure that you deselect them now. As long as these components are not configured for use, there should be no problem with the errant installation.

-

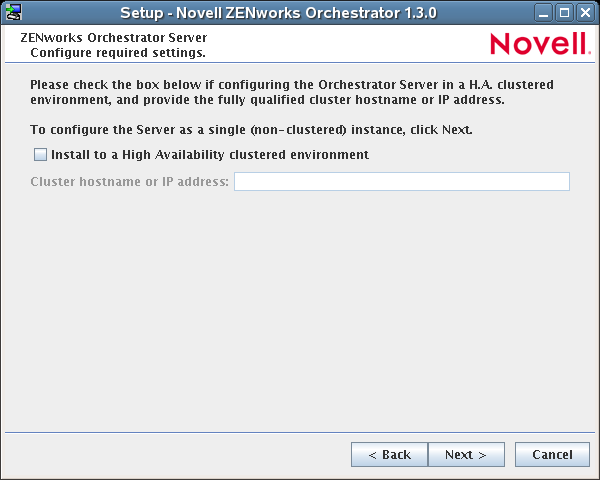

Click to display the High Availability configuration page.

-

Select to configure the server for high availability, then enter the hostname of IP address of the cluster in the field.

-

Click on the succeeding pages and provide information for the wizard to use during the configuration process. You can refer to the information in Table 1-1, Orchestrator Configuration Information for details about the configuration data that you need to provide. The GUI Configuration Wizard uses this information to build a response file that is consumed by the setup program inside the GUI Configuration Wizard.

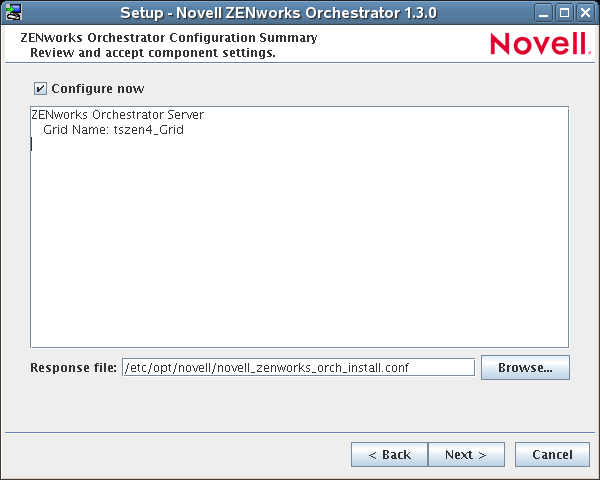

When you have finished answering the configuration questions in the wizard, the ZENworks Orchestrator Configuration Summary page is displayed.

IMPORTANT:Although this page of the wizard lets you navigate using the Tab key and spacebar, you need to use the Shift+Tab combination to navigate past the summary list. Click if you accidentally enter the summary list and re-enter the page to navigate to the control buttons.

By default, the check box on this page is selected. If you accept the default of having it selected, the wizard starts ZENworks Orchestrator and applies the configuration settings.

If you deselect the check box, the wizard writes out the configuration file to /etc/opt/novell/novell_zenworks_orch_install.conf without starting Orchestrator or applying the configuration settings. You can use this saved .conf file to start the Orchestrator Server and apply the settings. Do this either by running the configuration script manually or by using an installation script. Use the following command to run the configuration script:

/opt/novell/zenworks/orch/bin/config -rs <path_to_config_file>

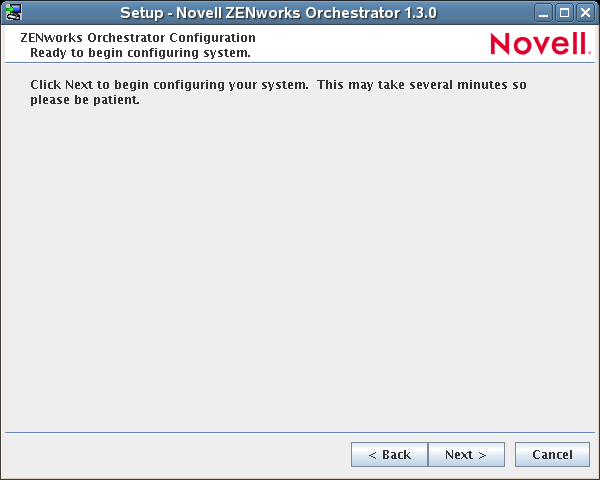

-

Click to display the following wizard page.

-

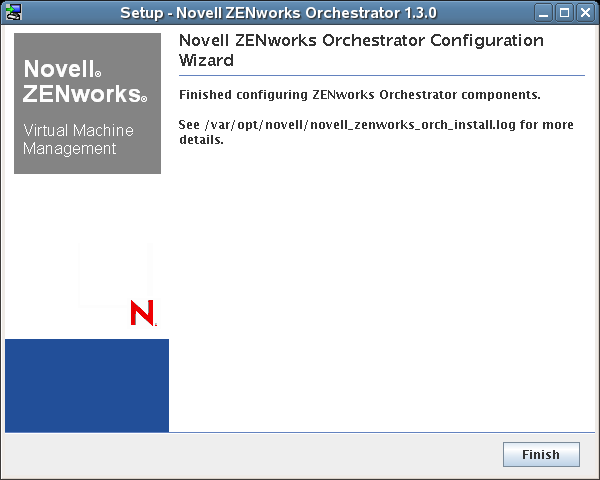

Click to launch the configuration script. When the configuration is finished, the following page is displayed:

-

Click to close the configuration wizard.

-

-

-

Open the configuration log file (/var/opt/novell/novell_zenworks_orch_install.log) to make sure that the components were correctly configured.

You might want to change the configuration if you change your mind about some of the parameters you provided in the configuration process. To do so, you can rerun the configuration and change your responses during the configuration process.
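Rather than reading the whole log by eye, you can first scan it for lines that look like failures. The following is a minimal sketch that assumes problems are logged with words such as "error", "fail", or "exception"; the actual wording of the Orchestrator log is not documented here, so adjust the pattern as needed. The function name is hypothetical.

```sh
#!/bin/sh
# Sketch: list suspicious lines in the Orchestrator configuration log.
# Exit status: 0 = nothing found, 1 = suspicious lines found, 2 = unreadable.
check_config_log() (
    log=${1:-/var/opt/novell/novell_zenworks_orch_install.log}
    [ -r "$log" ] || { echo "Cannot read $log" >&2; exit 2; }
    # -i ignores case, -n prefixes each hit with its line number in the log.
    if grep -Ein 'error|fail|exception' "$log"; then
        echo "Possible configuration problems found in $log" >&2
        exit 1
    fi
    echo "No error lines found in $log"
)
```

Called without an argument, `check_config_log` reads the default log path given above; any hits are printed with their line numbers so you can open the log at the right place.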

The configuration script performs the following functions in sequence on this server:

- Binds the cluster IP on this server by issuing the following command internally:

  ```
  IPaddr2 start <IP_address_you_provided>
  ```

  IMPORTANT: Make sure you configure DNS to resolve the cluster hostname to the cluster IP.
- Configures the Orchestrator Server.
- Shuts down the Orchestrator Server, because you specified that this is a High Availability configuration.
- Unbinds the cluster IP on this server by issuing the following command internally:

  ```
  IPaddr2 stop <IP_address_you_provided>
  ```

When these steps are completed, the first node of the Orchestrator Server cluster is set up.
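Because the cluster hostname must resolve to the cluster IP, it is worth checking resolution on every node. The following sketch uses `getent hosts`, which consults the same name service configuration (/etc/hosts, DNS) as other programs on the node; the helper names are hypothetical, and only the addresses that `getent` returns are compared.

```sh
#!/bin/sh
# Sketch: confirm that the cluster hostname resolves to the cluster IP address.

# Succeeds if any line of "getent hosts"-style input (address first, then
# names) carries the expected IP address.
has_address() (
    awk -v ip="$1" '$1 == ip { found = 1 } END { exit !found }'
)

resolves_to() (
    name=$1       # fully qualified cluster hostname
    expected=$2   # cluster IP address entered in the configuration script
    getent hosts "$name" | has_address "$expected"
)
```

For example, `resolves_to zos-cluster.example.com 10.0.0.50 || echo "fix DNS first"` (both values are placeholders for your own cluster hostname and IP address).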

ZENworks Orchestrator Configuration Information

The following table includes the information required by the ZENworks Orchestrator configuration (config) and the configuration wizard (guiconfig) when configuring the Orchestrator Server component for High Availability. The information is organized in this way to make it readily available. The information is listed in the order that it is requested by the configuration script or wizard.

Table 1-1 Orchestrator Configuration Information

| Configuration Information | Explanation |
|---|---|
| Orchestrator Server | Because the ZENworks Orchestrator Server must always be installed for a full Orchestrator system, the following questions are always asked when you have installed server patterns prior to the configuration process: |
| Orchestrator Server (continued) | |
| Configuration Summary | When you have completed the configuration process, you have the option of viewing a summary of the configuration information. |

1 This configuration parameter is considered an advanced setting for the Orchestrator Server in the ZENworks Orchestrator Configuration Wizard. If you select the check box in the wizard, the setting is configured with normal defaults. Leaving the check box deselected lets you have the option of changing the default value.

2 This configuration parameter is considered an advanced setting for the Orchestrator Server in the ZENworks Orchestrator Configuration Wizard. If you select the check box in the wizard, this parameter is listed, but default values are provided only if the previous value is manually set to .

1.2.6 Run the High Availability Configuration Script

Before you run the High Availability Configuration Script, make sure that you have installed the ZENworks Orchestrator Server to a single node of your high availability cluster. For more information, see Section 1.2.5, Install and Configure ZENworks Orchestrator on the First Clustered Node.

IMPORTANT: The High Availability Configuration Script asks for the mount point on the Fibre Channel SAN. Make sure that you have that information (/zos) before you run the script.

The high availability script, zos_server_ha_post_config, is located in /opt/novell/zenworks/orch/bin with the other configuration tools. You need to run this script on the first node of the cluster (that is, the node where you installed ZENworks Orchestrator Server) as the next step in setting up ZENworks Orchestrator to work in a high availability environment.

The script performs the following functions:

- Verifies that the Orchestrator Server is not running
- Moves the Orchestrator files to shared storage (first node of the cluster)
- Creates symbolic links pointing to the location of shared storage (all nodes of the cluster)

The High Availability Configuration Script must be run on all nodes of the cluster. Make sure that you follow the prompts in the script exactly; do not misidentify a secondary node in the cluster as the primary node.
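After the script has run on a node, you can spot-check that the paths it reports having moved are now symbolic links into the shared storage. This guide does not list those paths, so the sketch below takes them as arguments; the function name is hypothetical, and it assumes the links use absolute targets.

```sh
#!/bin/sh
# Sketch: succeed if PATH is a symbolic link whose target lies under SHARED.
links_to_shared() (
    path=$1
    shared=${2:-/zos}
    [ -L "$path" ] || { echo "$path is not a symbolic link" >&2; exit 1; }
    target=$(readlink "$path")   # raw link target, assumed to be absolute
    case $target in
        "$shared"|"$shared"/*) exit 0 ;;
        *) echo "$path points to $target, outside $shared" >&2; exit 1 ;;
    esac
)
```

Run `links_to_shared <path_reported_by_the_script> /zos` for each path on every node; a non-zero exit status indicates a node where the script did not complete as expected.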

1.2.7 Install and Configure Orchestrator Server Packages on Other Nodes in the Cluster for High Availability

When you have followed the steps to set up the primary node in your planned cluster, you need to set up the other nodes that you intend to use for failover in that cluster. Use the following sequence as you set up other cluster nodes (the sequence is nearly identical to setting up the primary node):

- Make sure that the SLES 10 SP1 nodes have the High Availability pattern. For information, see Section 1.2.2, Install the High Availability Pattern for SLES 10.
- Make sure that the SLES 10 SP1 nodes have been configured with time synchronization. For information, see Section 1.2.3, Configure Nodes with Time Synchronization and Install Heartbeat to Each Node.
- Set up OCFS2 on each node so that the nodes can communicate with the SAN, making sure to designate /zos as the shared mount point. For more information, see Section 1.2.4, Set Up OCFS2.
- Install ZENworks Orchestrator Server packages on this node. Use the steps as described in Installing the Orchestrator Server YaST Patterns on the Node.

  NOTE: Do not run the initial configuration script (config or guiconfig) on any node other than the primary node.
- Copy the license file (key.txt) from the first node to the /opt/novell/zenworks/zos/server/license/ directory on this node.
- Run the High Availability Configuration script on this node, as described in Run the High Availability Configuration Script. This creates the symbolic links to the file paths on the SAN.

When you have installed and configured other nodes in the cluster, you are ready to test the failover of the ZENworks Orchestrator Server in the high-availability cluster.

1.2.8 Create the Cluster Resource Group

The resource group creation script, zos_server_ha_resource_group, is located in /opt/novell/zenworks/orch/bin with the other configuration tools. You can run this script on the first node of the cluster to set up the cluster resource group. If you want to set up the resource group using Heartbeat (GUI console or command line tool), running the script is optional.

The script performs the following functions:

- Obtains the DNS name from the ZENworks Orchestrator configuration file.
- Creates the cluster resource group.
- Configures resource stickiness to avoid unnecessary failbacks.

The zos_server_ha_resource_group script prompts you for the IP address of the Orchestrator Server cluster. The script then adds this address to a Heartbeat2 Cluster Information Base (CIB) XML template called cluster_zos_server.xml and uses the following command to create the cluster resource group:

```
/usr/sbin/cibadmin -o resources -C -x $XMLFILE
```

The CIB XML template is located at /opt/novell/zenworks/orch/bin/ha/cluster_zos_server.xml. An unaltered template sample is shown below:

```xml
<group id="ZOS_Server">
  <primitive id="ZOS_Server_Cluster_IP" class="ocf" type="IPaddr2" provider="heartbeat">
    <instance_attributes>
      <attributes>
        <nvpair name="ip" value="$CONFIG_ZOS_SERVER_CLUSTER_IP"/>
      </attributes>
    </instance_attributes>
  </primitive>
  <primitive id="ZOS_Server_Instance" class="lsb" type="novell-zosserver" provider="heartbeat">
    <instance_attributes id="zos_server_instance_attrs">
      <attributes>
        <nvpair id="zos_server_target_role" name="target_role" value="started"/>
      </attributes>
    </instance_attributes>
    <operations>
      <op id="ZOS_Server_Status" name="status" description="Monitor the status of the ZOS service" interval="60" timeout="15" start_delay="15" role="Started" on_fail="restart"/>
    </operations>
  </primitive>
</group>
```

The template shows that a cluster resource group comprises these components:

- The ZENworks Orchestrator Server
- The ZENworks Orchestrator Server cluster IP address
- The NFS Server
- A dependency on the cluster file system resource group that you already created
- Resource stickiness to avoid unnecessary failbacks
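If you create the resource group with the Heartbeat command line tools instead of the script, you need to perform the same substitution by hand. The sketch below is not the code of zos_server_ha_resource_group; it assumes only that the template contains the literal placeholder $CONFIG_ZOS_SERVER_CLUSTER_IP shown in the sample above, and it accepts only dotted IPv4 addresses. The function name is hypothetical.

```sh
#!/bin/sh
# Sketch: fill the cluster IP placeholder in a CIB XML template and write the
# result to a new file that can be passed to cibadmin.
render_cib_template() (
    template=$1    # e.g. /opt/novell/zenworks/orch/bin/ha/cluster_zos_server.xml
    cluster_ip=$2  # IP address of the Orchestrator Server cluster
    output=$3      # rendered XML file
    # Accept only digits and dots so the value is safe inside the sed
    # replacement text (no "/", "&", or "\" characters).
    case $cluster_ip in
        ""|*[!0-9.]*) echo "Invalid IP address: $cluster_ip" >&2; exit 1 ;;
    esac
    # \$ makes sed match the literal "$" at the start of the placeholder.
    sed 's/\$CONFIG_ZOS_SERVER_CLUSTER_IP/'"$cluster_ip"'/g' "$template" > "$output"
)
```

The rendered file can then be loaded with the command the script uses, for example `/usr/sbin/cibadmin -o resources -C -x /tmp/zos_group.xml` (the output path is a placeholder). Rendering to a separate file leaves the shipped template unaltered for the other nodes.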

When you have installed and configured the nodes in the cluster and created a cluster resource group, use the Heartbeat tools to start the cluster resource group. You are then ready to test the failover of the ZENworks Orchestrator Server in the high-availability cluster.

1.2.9 Test the Failover of the ZENworks Orchestrator Server in a Cluster

You can optionally simulate a failure of the Orchestrator Server by powering off or performing a shutdown of the server. After approximately 30 seconds, the clustering software detects that the primary node is no longer functioning, binds the IP address to the failover server, then starts the failover server in the cluster.

Access the ZENworks Orchestrator Administrator Information Page to verify that the Orchestrator Server is installed and running (stopped or started). Use the following URL to open the page in a Web browser:

http://DNS_name_or_IP_address_of_cluster:8001
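Because failover takes roughly 30 seconds plus the time the Orchestrator Server needs to start, polling the Administrator Information page is more convenient than repeatedly refreshing a browser. The following is a minimal sketch of a generic retry helper; the helper name is hypothetical, and the curl invocation and cluster hostname in the usage note are assumptions you should adapt.

```sh
#!/bin/sh
# Sketch: run a command until it succeeds or the attempts run out.
# Usage: wait_until ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
wait_until() (
    attempts=$1   # maximum number of tries
    delay=$2      # seconds to sleep between tries
    shift 2
    i=1
    while [ "$i" -le "$attempts" ]; do
        "$@" && exit 0
        # Sleep only if another attempt follows.
        [ "$i" -lt "$attempts" ] && sleep "$delay"
        i=$((i + 1))
    done
    exit 1
)
```

For example, `wait_until 24 5 curl -sf -o /dev/null http://zos-cluster.example.com:8001/` keeps trying for about two minutes, which matches the 120-second start timeout recommended for Heartbeat.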

The Administrator Information page includes links to separate installation programs (installers) for the ZENworks Orchestrator Agent (Orchestrator Agent) and the ZENworks Orchestrator Clients (Orchestrator Clients). The installers are used for various operating systems.

1.2.10 Install and Configure other ZENworks Orchestrator Components to the High Availability Grid

To install and configure other ZENworks Orchestrator components (including the Orchestrator Agent, the Monitoring Agent, the Monitoring Server, the VM Builder, or the VM Warehouse) on servers that authenticate to the cluster, you need to do the following:

- Determine which components you want to install, remembering these dependencies:
  - All non-agent Orchestrator components must be installed on a SLES 10 SP1 server, a RHEL 4 server, or a RHEL 5 server.
  - The ZENworks Orchestrator Agent must be installed on a SLES 10 SP1 server, a RHEL 4 server, a RHEL 5 server, or a Windows (NT, 2000, XP) server.
  - A VM Warehouse must be installed on the same server as a VM Builder. A VM Builder can be installed independently of the VM Warehouse on its own server.
- Use YaST2 to install the Orchestrator packages of your choice to the network server resources of your choice. For more information, see Installing and Configuring ZENworks Orchestrator Components or Installing the VM Management Console in the Novell ZENworks Orchestrator 1.3 Installation and Getting Started Guide.

  If you want to, you can download the Orchestrator Agent or clients from the Administrator Information page and install them to a network resource as directed in Independent Installation of the Agent and Clients in the Novell ZENworks Orchestrator 1.3 Installation and Getting Started Guide.
- Run the text-based configuration script or the GUI Configuration Wizard to configure the Orchestrator components you have installed (including any type of installation of the agent). As you do this, you need to know the hostname of the Orchestrator Server (that is, the primary Orchestrator Server node) and the administrator name and password of this server. For more information, see Installing and Configuring ZENworks Orchestrator Components or Installing the VM Management Console in the Novell ZENworks Orchestrator 1.3 Installation and Getting Started Guide.

It is important to understand that virtual machines under the management of ZENworks Orchestrator are also highly available: if a host is lost, ZENworks Orchestrator re-provisions its virtual machines elsewhere. This is true as long as the constraints in Orchestrator allow it to re-provision them (for example, if the virtual machine image is on shared storage).

IMPORTANT: If you want to use Virtual Machine Management (that is, the VM Warehouse and the VM Builder) in your High Availability environment, you need to further configure the environment to cache the VM images. For more information, see Section 1.2.11, Creating an NFS Share for VM Management to Use in a High Availability Environment.

1.2.11 Creating an NFS Share for VM Management to Use in a High Availability Environment

Use the following procedure to create an NFS share when the VM Warehouse and Builder are installed on a machine outside the cluster in which the ZENworks Orchestrator Server is installed.

IMPORTANT: You must execute these steps after you run the High Availability Configuration Script (see Section 1.2.6, Run the High Availability Configuration Script) on all nodes of the cluster.

-

Create the required directories on one node in the cluster where the Orchestrator Server is installed.

-

At the command line, run the following command:

mkdir /zos/server/dataGrid/files/warehouse/import

-

At the command line, run the following command:

mkdir /zos/server/dataGrid/files/warehouse/export
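You can also combine the two mkdir commands above into one repeatable step. The sketch below assumes that the shared cluster storage is mounted at /zos, as in the commands above. The function name create_warehouse_dirs and its optional root argument are hypothetical. They were added only so that the paths can be checked outside a cluster.

```shell
#!/bin/sh
# Hypothetical helper: create the VM Warehouse import and export directories.
# $1 is the root of the shared cluster storage; it defaults to /zos.
create_warehouse_dirs() {
    root="${1:-/zos}"
    warehouse="$root/server/dataGrid/files/warehouse"
    # -p creates any missing parent directories and does not fail if the
    # directories already exist, so the step is safe to repeat.
    mkdir -p "$warehouse/import" "$warehouse/export"
}

# Usage on one clustered node:
#   create_warehouse_dirs /zos
```

Unlike plain mkdir, this version also succeeds if the warehouse parent directory does not exist yet.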

-

-

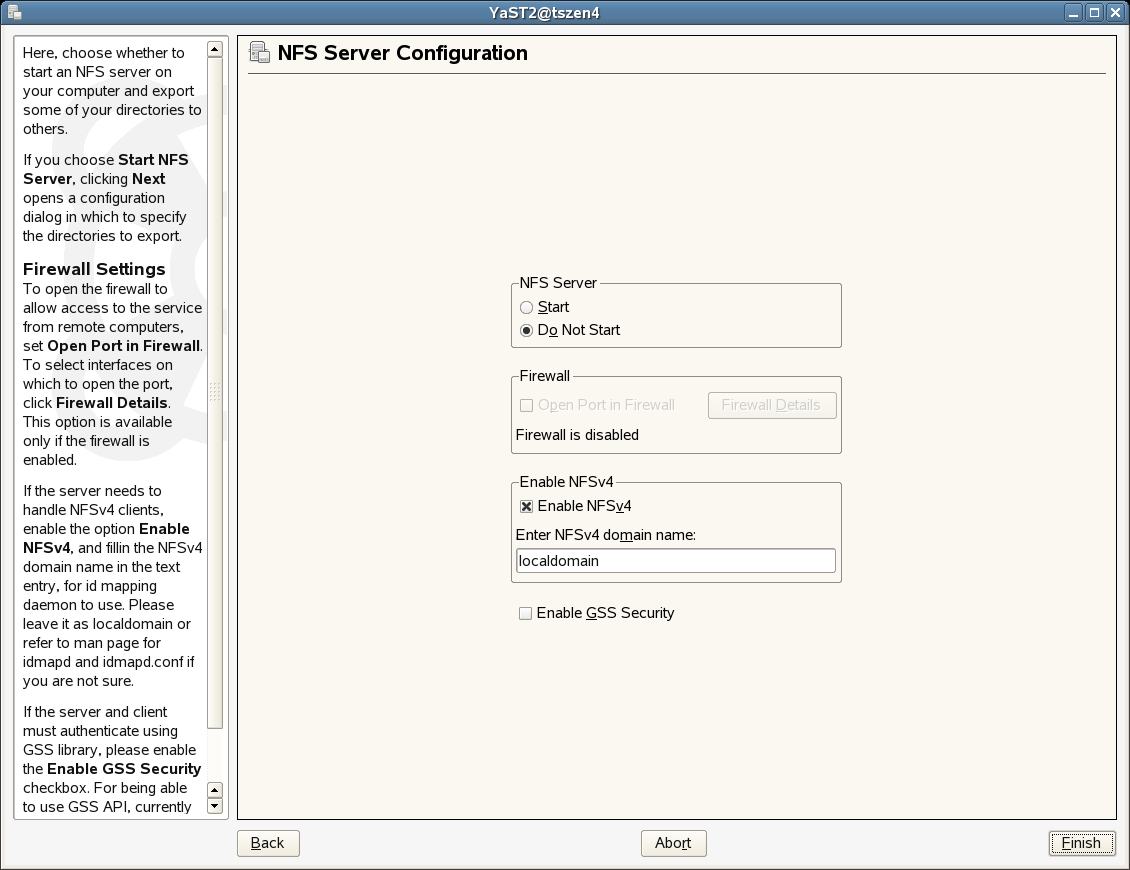

Start the NFS Server on each node in the cluster where the Orchestrator Server is installed.

-

At the command line, run the following command:

yast2 nfs_server

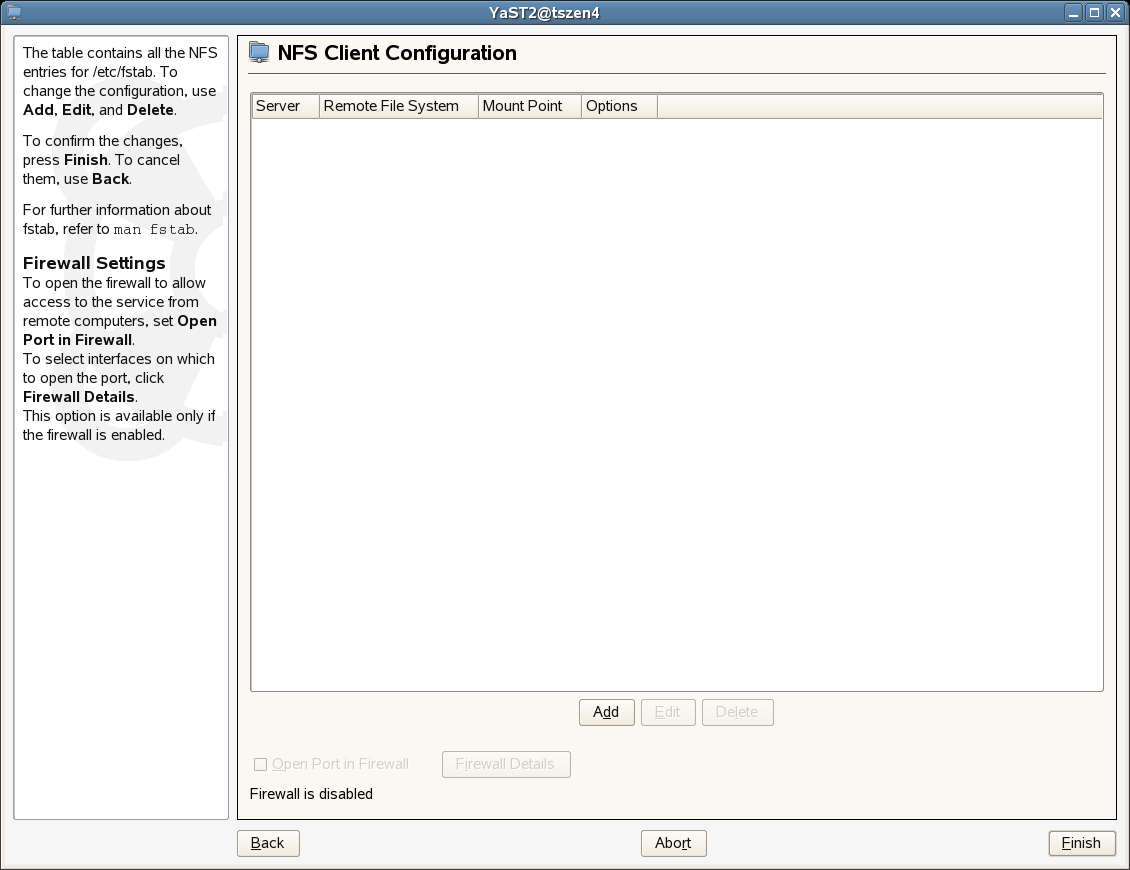

The NFS Server Configuration utility is displayed.

NOTE:Make sure that the firewall is disabled. If it is enabled, select the check box.

Do not select .

-

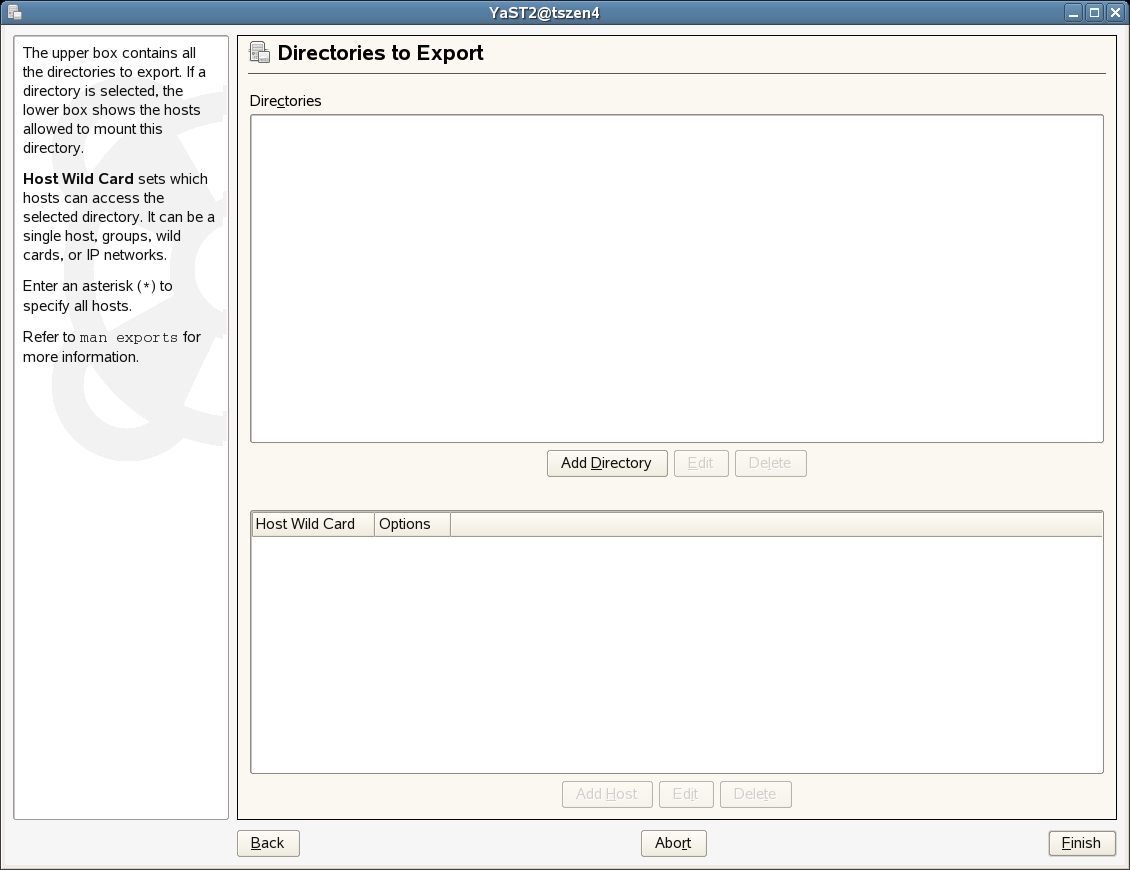

In the NFS Server Configuration utility, select , deselect , then click to display the Directories to Export page.

-

On the Directories to Export page, click , add /zos/server/dataGrid/files/warehouse as the directory path, then click to display a browser popup box.

-

In the popup box, fill in the fields:

Host Wild Card: Enter the IP address of the VM Warehouse Server.

Options: Edit the options string so that it appears as rw,sync,no_root_squash

-

Click to save the configuration and start the NFS Server.
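The YaST NFS Server module records exports in /etc/exports. Given the values entered in the popup box above, the resulting entry should be equivalent to the line this sketch prints. The function name warehouse_export_entry is hypothetical, and 192.0.2.10 is a placeholder for the VM Warehouse Server address.

```shell
#!/bin/sh
# Hypothetical helper: print the /etc/exports line that corresponds to the
# YaST settings (export path, VM Warehouse IP, and options string).
warehouse_export_entry() {
    warehouse_ip="$1"    # IP address of the VM Warehouse Server
    printf '%s %s(%s)\n' \
        /zos/server/dataGrid/files/warehouse \
        "$warehouse_ip" \
        rw,sync,no_root_squash
}

# Usage: compare the expected line with what YaST wrote on each node.
#   warehouse_export_entry 192.0.2.10
#   grep warehouse /etc/exports
```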

-

-

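At the command line on each node in the cluster where the Orchestrator Server is installed, run the following commands so that the NFS Server and NFS boot services do not start automatically at boot (Heartbeat manages the NFS Server instead, as configured later in this procedure):

chkconfig nfsserver off
chkconfig nfsboot off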

-

Create the following script and run it on each node in the cluster where the Orchestrator Server is installed:

if [ -d /zos/nfs ]
then
    rm -rf /var/lib/nfs
else
    mv /var/lib/nfs /zos/
fi
ln -sf /zos/nfs /var/lib
sed -i s"/OPTIONS=\" -q\"/OPTIONS=\" -q -v $cluster_IP_address\"/" /etc/init.d/nfsboot

NOTE:Before you run the script, replace $cluster_IP_address on the sed line with the IP address of the cluster.
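The relocation script for each node can also be written as a function that takes the cluster IP address as an argument, so you do not need to edit it into the sed line. The sketch below makes that assumption. The function name relocate_nfs_state and its optional path arguments are hypothetical, and the paths default to the ones the script uses.

```shell
#!/bin/sh
# Hypothetical helper mirroring the per-node relocation script.
#   $1  cluster IP address (required)
#   $2  shared cluster storage, default /zos
#   $3  parent of the local NFS state directory, default /var/lib
#   $4  nfsboot init script, default /etc/init.d/nfsboot
relocate_nfs_state() {
    cluster_ip="$1"
    zos="${2:-/zos}"
    varlib="${3:-/var/lib}"
    initscript="${4:-/etc/init.d/nfsboot}"
    if [ -z "$cluster_ip" ]; then
        echo "usage: relocate_nfs_state CLUSTER_IP [ZOS VARLIB INITSCRIPT]" >&2
        return 1
    fi
    if [ -d "$zos/nfs" ]; then
        # Shared copy already exists (another node ran this): drop the local one.
        rm -rf "$varlib/nfs"
    else
        # No shared copy yet: move the local NFS state onto shared storage.
        mv "$varlib/nfs" "$zos/"
    fi
    # Point the local path at the shared copy.
    ln -sf "$zos/nfs" "$varlib/nfs"
    # Append "-v <cluster IP>" to the OPTIONS line; a second run finds no match.
    sed -i "s/OPTIONS=\" -q\"/OPTIONS=\" -q -v $cluster_ip\"/" "$initscript"
}

# Usage as root on each clustered node (10.0.0.1 is a placeholder):
#   relocate_nfs_state 10.0.0.1
```

Running the function a second time on the same node leaves the symbolic link and the nfsboot OPTIONS line in place.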

-

On only one of the nodes in the cluster where the ZENworks Orchestrator is installed, set up the NFS High Availability Service. The cluster must be running when you do this.

-

From the command line of this server, run the following command. It creates the /root/nfs.xml file, which defines the nfsserver resource as a member of the ZOS_Server group:

cat > /root/nfs.xml <<EOF
<group id="ZOS_Server">
<primitive id="nfsserver" class="lsb" type="nfsserver" provider="heartbeat">
</primitive>
</group>
EOF

-

From the command line of this server, run the following command:

cibadmin -U -x /root/nfs.xml
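The two commands above can be kept together as a small helper. In the sketch below, the name write_nfs_group_xml and its optional output-path argument are hypothetical. Quoting the here-document delimiter ('EOF') keeps the shell from expanding anything inside the XML.

```shell
#!/bin/sh
# Hypothetical helper: write the Heartbeat resource definition that places
# the nfsserver LSB init script in the ZOS_Server group.
write_nfs_group_xml() {
    out="${1:-/root/nfs.xml}"
    # 'EOF' is quoted, so the XML is written exactly as shown.
    cat > "$out" <<'EOF'
<group id="ZOS_Server">
  <primitive id="nfsserver" class="lsb" type="nfsserver" provider="heartbeat">
  </primitive>
</group>
EOF
}

# Usage on only one node, while the cluster is running:
#   write_nfs_group_xml /root/nfs.xml && cibadmin -U -x /root/nfs.xml
```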

-

-

Install the VM Warehouse/Builder component on a resource that is not inside the cluster. For more information on installing and configuring this component, see Installing and Configuring ZENworks Orchestrator Components or Installing the VM Management Console in the Novell ZENworks Orchestrator 1.3 Installation and Getting Started Guide.

-

Mount the VM Warehouse share located inside the cluster using one of the following methods.

-

(Method 1) On the command line of the VM Warehouse/Builder Server, run the following command:

yast2 nfs add spec="<cluster_IP_address>:/zos/server/dataGrid/files/warehouse" file="/var/opt/novell/zenworks/zos/server/dataGrid/files/warehouse"

-

(Method 2) On the command line of the VM Warehouse/Builder Server, run the following command:

yast2 nfs

This command displays the NFS Client Configuration utility.

-

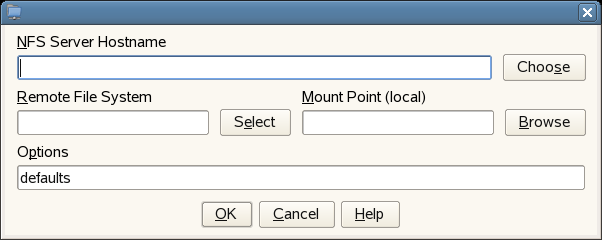

In the NFS Client Configuration utility, click to open a dialog box you can use to specify the share information.

-

In the dialog box, fill in the following fields:

NFS Server Hostname: Enter the IP Address of the High Availability cluster, for example:

10.255.255.255

Remote File System: Enter the name of the remote file system (that is, the shared directory) you created:

/zos/server/dataGrid/files/warehouse

Mount Point (local): Enter the local mount point:

/var/opt/novell/zenworks/zos/server/dataGrid/files/warehouse

-

Click , then click .
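Either method records the share as an NFS entry in /etc/fstab on the VM Warehouse/Builder Server. The sketch below prints an equivalent entry and assumes default mount options, though YaST may record different ones. The function name warehouse_fstab_entry is hypothetical, and 192.0.2.20 stands in for the cluster IP address.

```shell
#!/bin/sh
# Hypothetical helper: print an /etc/fstab line that mounts the clustered
# VM Warehouse export on the VM Warehouse/Builder Server.
warehouse_fstab_entry() {
    cluster_ip="$1"    # IP address of the High Availability cluster
    remote=/zos/server/dataGrid/files/warehouse
    mount_point=/var/opt/novell/zenworks/zos/server/dataGrid/files/warehouse
    printf '%s:%s %s nfs defaults 0 0\n' "$cluster_ip" "$remote" "$mount_point"
}

# Usage: mount the share once by hand to confirm that it is reachable:
#   mount -t nfs 192.0.2.20:/zos/server/dataGrid/files/warehouse \
#       /var/opt/novell/zenworks/zos/server/dataGrid/files/warehouse
```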

-

NOTE:For information about creating an NFS Share for the VM Warehouse/Builder in a non-clustered environment, see Step 9 in Installation and Configuration Steps in the Novell ZENworks Orchestrator 1.3 Installation and Getting Started Guide.