4.1 Requirements for BCC 1.2.1 for OES 2 SP2 Linux

The requirements in this section must be met prior to installing Novell Business Continuity Clustering 1.2.1 for OES 2 SP2 Linux.

4.1.1 Business Continuity Clustering License

Novell Business Continuity Clustering software requires a license agreement for each business continuity cluster. For purchasing information, see Novell Business Continuity Clustering.

4.1.2 Business Continuity Cluster Component Locations

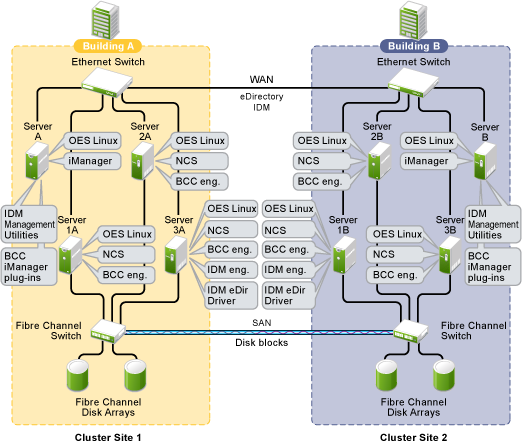

Figure 4-1 illustrates where the various components needed for a business continuity cluster are installed.

Figure 4-1 Business Continuity Cluster Component Locations

Figure 4-1 uses the following abbreviations:

- BCC: Novell Business Continuity Clustering 1.2.1 for OES 2 SP2 Linux

- eDir: Novell eDirectory 8.8.5

- IDM: Identity Manager 3.6.1 (32-bit or 64-bit)

- iManager: Novell iManager 2.7.3

- NCS: Novell Cluster Services 1.8.7 for OES 2 SP2 Linux, with the January 2010 patch

- OES Linux: Novell Open Enterprise Server 2 SP2 for Linux, with the January 2010 patch

4.1.3 OES 2 SP2 Linux

Novell Open Enterprise Server (OES) 2 Support Pack 2 (SP2) Linux must be installed and running on each node in every peer cluster that will be part of the business continuity cluster. The January 2010 patch is required.

See the OES 2 SP2: Linux Installation Guide for information on installing, configuring, and updating (patching) OES 2 SP2 Linux.

4.1.4 Novell Cluster Services 1.8.7 for Linux

You need two to four clusters. Novell Cluster Services 1.8.7 (the version that ships with OES 2 SP2 Linux), with the latest patches applied, must be installed and running on each node in each cluster.

See the OES 2 SP3: Novell Cluster Services 1.8.8 Administration Guide for Linux for information on installing, configuring, and managing Novell Cluster Services.

Consider the following when preparing your clusters for the business continuity cluster:

Cluster Names

Each cluster must have a unique name, even if the clusters reside in different Novell eDirectory trees. Clusters must not have the same name as any of the eDirectory trees in the business continuity cluster.
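The naming rules above, together with the two-to-four cluster requirement, can be checked before installation with a short script. The following is a minimal sketch, not a Novell tool: `check_peer_clusters` is a hypothetical helper, and it assumes that cluster and tree names are compared case-insensitively.

```python
"""Pre-installation sanity check for peer cluster names (hypothetical helper)."""


def check_peer_clusters(cluster_names, tree_names):
    """Return a list of problems; an empty list means the names look valid.

    Assumption: names are compared case-insensitively.
    """
    problems = []
    # A business continuity cluster needs two to four peer clusters.
    if not 2 <= len(cluster_names) <= 4:
        problems.append(f"expected 2 to 4 clusters, got {len(cluster_names)}")

    seen = set()
    trees = {t.lower() for t in tree_names}
    for name in cluster_names:
        key = name.lower()
        # Every cluster name must be unique.
        if key in seen:
            problems.append(f"duplicate cluster name: {name}")
        seen.add(key)
        # A cluster must not share its name with an eDirectory tree.
        if key in trees:
            problems.append(f"cluster name matches an eDirectory tree name: {name}")
    return problems


if __name__ == "__main__":
    print(check_peer_clusters(["cluster1", "cluster2", "cluster3"], ["CORP-TREE"]))
```

With the sample names shown, the script prints `[]` because no rule is violated.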

Storage

The storage requirements for Novell Business Continuity Clustering software are the same as for Novell Cluster Services. For more information, see the OES 2 SP3: Novell Cluster Services 1.8.8 Administration Guide for Linux.

Some storage vendors require you to purchase or license their CLI (Command Line Interface) separately. The CLI for the storage system might not initially be included with your hardware.

Also, some storage hardware might not be SMI-S compliant, in which case it cannot be managed by using SMI-S commands.

eDirectory

The recommended configuration is to have each cluster in the same eDirectory tree but in different OUs (Organizational Units). BCC 1.2.x for Linux supports only a single-tree setup.

Peer Cluster Credentials

To add or change peer cluster credentials, you must access iManager on a server that is in the same eDirectory tree as the cluster where you are adding or changing peer credentials.

4.1.5 Novell eDirectory 8.8.5

Novell eDirectory 8.8.5 is supported with Business Continuity Clustering 1.2.1. See the eDirectory 8.8.5 documentation for more information.

eDirectory Containers for Clusters

Each of the clusters that you want to add to a business continuity cluster should reside in its own OU-level container. Each OU should reside in a different eDirectory partition.

As a best practice, put each peer cluster's Server objects, Cluster object, Driver objects, and Landing Zone in the same eDirectory partition.

For example:

| ou=cluster1 | ou=cluster2 | ou=cluster3 |
|---|---|---|
| ou=cluster1LandingZone | ou=cluster2LandingZone | ou=cluster3LandingZone |
| cn=cluster1 | cn=cluster2 | cn=cluster3 |
| c1server35 c1server36 c1server37 (IDM node with read/write access to ou=cluster1) | c2server135 c2server136 c2server137 (IDM node with read/write access to ou=cluster2) | c3server235 c3server236 c3server237 (IDM node with read/write access to ou=cluster3) |
| cluster1BCCDrivers | cluster2BCCDrivers | cluster3BCCDrivers |

Rights Needed for Installing BCC

The first time that you install the Business Continuity Clustering engine software in an eDirectory tree, the eDirectory schema is automatically extended with BCC objects.

IMPORTANT: The user who installs BCC must have the eDirectory credentials necessary to extend the schema.

If the eDirectory administrator username or password contains special characters (such as $ or #), you might need to escape each special character by preceding it with a backslash (\) when you enter the credentials in some interfaces.
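A minimal sketch of the escaping rule follows. The hypothetical `escape_credential` helper escapes `$` and `#` (the examples given above) plus the backslash itself; the exact set of characters that a given interface treats as special is an assumption, so adjust `SPECIAL_CHARS` for the interface you are using.

```python
# Characters to escape. '$' and '#' come from the documentation; escaping the
# backslash itself is an assumption so that an existing '\' is not misread.
SPECIAL_CHARS = frozenset("$#\\")


def escape_credential(value: str, special=SPECIAL_CHARS) -> str:
    """Precede each special character in value with a backslash."""
    return "".join("\\" + ch if ch in special else ch for ch in value)


if __name__ == "__main__":
    print(escape_credential("pa$$w0rd#1"))  # prints pa\$\$w0rd\#1
```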

Rights Needed for Individual Cluster Management

The BCC Administrator user is not automatically assigned the rights necessary to manage all aspects of each peer cluster. When managing individual clusters, you must log in as the Cluster Administrator user. You can manually assign the Cluster Administrator rights to the BCC Administrator user for each of the peer clusters if you want the BCC Administrator user to have all rights.

Rights Needed for BCC Management

Before you install BCC, create the BCC Administrator user and group identities in eDirectory to use when you manage the BCC. For information, see Section 4.3, Configuring a BCC Administrator User and Group.

Rights Needed for Identity Manager

The node where Identity Manager is installed must have an eDirectory full replica with at least Read/Write access to all eDirectory objects that will be synchronized between clusters. Alternatively, the node can hold the master replica instead of a Read/Write replica.

The replica does not need to contain all eDirectory objects in the tree. The eDirectory full replica must have at least read/write access to the following containers in order for the cluster resource synchronization and user object synchronization to work properly:

- The Identity Manager driver set container.

- The container where the Cluster object resides.

- The container where the Server objects reside.

  If Server objects reside in multiple containers, this must be a container high enough in the tree to be above all containers that contain Server objects.

  The best practice is to have all Server objects in one container.

- The container where the cluster Pool objects and Volume objects are placed when they are synchronized to this cluster. This container is referred to as the landing zone. The NCP Server objects for the virtual server of a BCC-enabled resource are also placed in the landing zone.

- The container where the User objects that need to be synchronized reside. Typically, the User objects container is in the same partition as the cluster objects.

IMPORTANT: Full eDirectory replicas are required. Filtered eDirectory replicas are not supported with this version of Business Continuity Clustering software.
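When Server objects are spread over several containers, the container that the replica must cover is the deepest container that sits above all of them. The sketch below computes that container from LDAP-style, comma-delimited DNs. `common_container` is a hypothetical helper; it assumes that no RDN contains an escaped comma, and it compares RDNs case-insensitively.

```python
def parse_dn(dn: str) -> list[str]:
    """Split a comma-delimited DN into RDNs, leaf first (no escaped commas assumed)."""
    return [rdn.strip() for rdn in dn.split(",") if rdn.strip()]


def common_container(object_dns: list[str]) -> str:
    """Return the deepest container that is an ancestor of every object DN."""
    # Drop each object's own RDN so only its container path remains, root first.
    paths = [list(reversed(parse_dn(dn)[1:])) for dn in object_dns]
    common = []
    # Walk down from the tree root while every path agrees on the next RDN.
    for rdns in zip(*paths):
        first = rdns[0]
        if all(r.lower() == first.lower() for r in rdns):
            common.append(first)
        else:
            break
    return ",".join(reversed(common))


if __name__ == "__main__":
    servers = [
        "cn=c1server35,ou=servers,ou=cluster1,o=corp",
        "cn=c1server36,ou=servers,ou=cluster1,o=corp",
        "cn=c1server37,ou=nodes,ou=cluster1,o=corp",
    ]
    print(common_container(servers))  # prints ou=cluster1,o=corp
```

The DNs and the o=corp organization are illustrative only.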

4.1.6 SLP

You must have SLP (Service Location Protocol) set up and configured properly on each server node in every cluster. Typically, SLP is installed as part of the eDirectory installation and setup when you install the server operating system. For information, see Configuring OpenSLP for eDirectory in the Novell eDirectory 8.8 Administration Guide.

4.1.7 Identity Manager 3.6.1 Bundle Edition

The Identity Manager 3.6.1 Bundle Edition (32-bit or 64-bit) is required for synchronizing the configuration of the peer clusters in your business continuity cluster. It is not involved in other BCC management operations such as migrating cluster resources within or across peer clusters.

Before you install Business Continuity Clustering on the cluster nodes, make sure that Identity Manager and the Identity Manager driver for eDirectory are installed on one node in each peer cluster that you want to be part of the business continuity cluster.

The same Identity Manager installation program that is used to install the Identity Manager engine is also used to install the Identity Manager eDirectory driver and management utilities. See Business Continuity Cluster Component Locations for information on where to install Identity Manager components.

Downloading the Bundle Edition

The bundle edition is a limited release of Identity Manager 3.6.1 for OES 2 SP2 Linux that allows you to use the Identity Manager software, the eDirectory driver, and the Identity Manager management tools for Novell iManager 2.7.3. BCC driver templates are applied to the eDirectory driver to create BCC-specific drivers that automatically synchronize BCC configuration information between the Identity Manager nodes in peer clusters.

To download Identity Manager, go to the Novell Downloads Web site.

Credential for Drivers

The Bundle Edition requires a credential that allows you to use drivers beyond an evaluation period. The credential can be found in the BCC license. In the Identity Manager interface in iManager, enter the credential for each driver that you create for BCC. You must also enter the credential for the matching driver that is installed in a peer cluster. You can either enter the credential directly or store it in a file and point to that file.

Identity Manager Engine and eDirectory Driver

BCC 1.2.1 requires Identity Manager 3.6.1 or later to run on one node in each of the peer clusters that belong to the business continuity cluster. (Identity Manager was formerly called DirXML.) Identity Manager should not be set up as a clustered resource. Each Identity Manager node must be online in its peer cluster, and Identity Manager must be running properly whenever you attempt to modify the BCC configuration or manage the BCC-enabled cluster resources.

For installation instructions, see the Identity Manager 3.6.1 Installation Guide.

The node where the Identity Manager engine and the eDirectory driver are installed must have an eDirectory full replica with at least Read/Write access to all eDirectory objects that will be synchronized between clusters. The replica does not need to contain all eDirectory objects in the tree. For information about the eDirectory full replica requirements, see Section 4.1.5, Novell eDirectory 8.8.5.

Identity Manager Driver for eDirectory

On the same node where you install the Identity Manager engine, install one instance of the Identity Manager driver for eDirectory.

For information about installing the Identity Manager driver for eDirectory, see Identity Manager 3.6.x Driver for eDirectory: Implementation Guide.

Identity Manager Management Utilities

The Identity Manager management utilities must be installed on the same server as Novell iManager. The Identity Manager utilities and iManager can be installed on a cluster node, but installing them on a non-cluster node is the recommended configuration. For information about iManager requirements for BCC, see Section 4.1.8, Novell iManager 2.7.3.

IMPORTANT: Identity Manager plug-ins for iManager require that eDirectory is running and working properly in the tree. If the plug-in does not appear in iManager, make sure that the eDirectory daemon (ndsd) is running on the server that contains the eDirectory master replica.

To restart ndsd on the master replica server, enter the following command at its terminal console prompt as the root user:

rcndsd restart

4.1.8 Novell iManager 2.7.3

Novell iManager 2.7.3 (the version released with OES 2 SP2 Linux, with the latest patches and plug-ins applied) must be installed and running on a server in the eDirectory tree where you are installing Business Continuity Clustering software. You need to install the BCC plug-in, the Clusters plug-in, and the Storage Management plug-in in order to manage the BCC in iManager. As part of the install process, you must also install plug-ins for the Identity Manager role that are management templates for configuring a business continuity cluster. The templates are in the novell-business-continuity-cluster-idm.rpm module.

For information about installing and using iManager, see the Novell iManager 2.7x documentation Web site.

The Identity Manager management utilities must be installed on the same server as iManager. You can install iManager and the Identity Manager utilities on a cluster node, but installing them on a non-cluster node is the recommended configuration. For information about Identity Manager requirements for BCC, see Section 4.1.7, Identity Manager 3.6.1 Bundle Edition.

See Business Continuity Cluster Component Locations for specific information on where to install Identity Manager components.

4.1.9 Storage-Related Plug-Ins for iManager 2.7.3

The Clusters plug-in (ncsmgmt.npm) for OES 2 SP2 Linux provides support for BCC 1.2.1. You must install the Clusters plug-in and the Storage Management plug-in (storagemgmt.npm).

IMPORTANT: The Storage Management plug-in module (storagemgmt.npm) contains common code required by all of the other storage-related plug-ins. Make sure that you include storagemgmt.npm when installing any of the others. If you use more than one of these plug-ins, you should install, update, or remove them all at the same time to make sure the common code works for all plug-ins.

Other storage-related plug-ins are Novell Storage Services (NSS) (nssmgmt.npm), Novell AFP (afpmgmt.npm), Novell CIFS (cifsmgmt.npm), Novell Distributed File Services (dfsmgmt.npm), and Novell Archive and Version Services (avmgmt.npm). NSS is required in order to use shared NSS pools as cluster resources. The other services are optional.
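The rule that storagemgmt.npm must accompany any other storage-related plug-in can be expressed as a small check. This is an illustrative sketch only: `plugin_install_set` is a hypothetical helper, and the file names are the ones listed in this section.

```python
COMMON_PLUGIN = "storagemgmt.npm"  # shared code required by every storage plug-in
STORAGE_PLUGINS = {
    "ncsmgmt.npm",   # Clusters
    "nssmgmt.npm",   # Novell Storage Services
    "afpmgmt.npm",   # Novell AFP
    "cifsmgmt.npm",  # Novell CIFS
    "dfsmgmt.npm",   # Novell Distributed File Services
    "avmgmt.npm",    # Novell Archive and Version Services
}


def plugin_install_set(requested):
    """Return the sorted list of .npm files to install together."""
    selected = set(requested)
    unknown = selected - STORAGE_PLUGINS - {COMMON_PLUGIN}
    if unknown:
        raise ValueError(f"not a storage-related plug-in: {sorted(unknown)}")
    if selected:  # any storage plug-in needs the common code
        selected.add(COMMON_PLUGIN)
    return sorted(selected)


if __name__ == "__main__":
    print(plugin_install_set(["ncsmgmt.npm", "nssmgmt.npm"]))
```

For the Clusters and NSS plug-ins, the sketch returns `['ncsmgmt.npm', 'nssmgmt.npm', 'storagemgmt.npm']`.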

The Storage Related Administration Plug-ins for iManager 2.7.3 are available as a zipped download file on the Novell Downloads Web site.

To install or upgrade the Clusters plug-in:

1. On the iManager server, if the OES 2 version of the storage-related plug-ins is installed, or if you upgraded this server from OES 2 Linux or NetWare 6.5 SP7, log in to iManager, then uninstall all of the storage-related plug-ins that are currently installed, including storagemgmt.npm.

   This step is necessary for upgrades only if you did not uninstall and reinstall the storage-related plug-ins as part of the upgrade process for OES 2 SP1 Linux.

2. Copy the new .npm files into the iManager plug-ins location, manually overwriting the older version of each plug-in in the packages folder with the newer version.

3. In iManager, install all of the storage-related plug-ins, or install only the plug-ins you need, plus the common code (storagemgmt.npm).

4. Restart Tomcat by entering the following command at a terminal console prompt:

   rcnovell-tomcat5 restart

5. Restart Apache by entering the following command at a terminal console prompt:

   rcapache2 restart

4.1.10 OpenWBEM

OpenWBEM must be running and must be configured with chkconfig to start automatically. For information, see the OES 2: OpenWBEM Services Administration Guide.

The CIMOM daemons on all nodes in the business continuity cluster must be configured to bind to all IP addresses on the server. For information, see Section 10.5, Configuring CIMOM Daemons to Bind to IP Addresses.

Port 5989 is the default setting for secure HTTP (HTTPS) communications. If you are using a firewall, the port must be opened for CIMOM communications.

Beginning in OES 2, the Clusters plug-in (and all other storage-related plug-ins) for iManager requires CIMOM connections for tasks that transmit sensitive information (such as a username and password) between iManager and the _admin volume on the OES 2 server that you are managing. CIMOM is typically running, so this requirement is normally met. CIMOM connections use Secure HTTP (HTTPS) to transfer data, which ensures that sensitive data is not exposed.

If CIMOM is not running when you submit a task that sends sensitive information, you get an error message explaining that the connection is not secure and that CIMOM must be running before you can perform the task.

IMPORTANT: If you receive file protocol errors, it might be because WBEM is not running.

To check the status of WBEM, log in as the root user in a terminal console, then enter:

rcowcimomd status

To start WBEM, log in as the root user in a terminal console, then enter:

rcowcimomd start

4.1.11 Shared Disk Systems

For Business Continuity Clustering, a shared disk storage system is required for each peer cluster in the business continuity cluster. See Shared Disk Configuration Requirements in the OES 2 SP3: Novell Cluster Services 1.8.8 Administration Guide for Linux.

In addition to the shared disks in an original cluster, you need additional shared disk storage in the other peer clusters to mirror the data between sites as described in Section 4.1.12, Mirroring Shared Disk Systems Between Peer Clusters.

4.1.12 Mirroring Shared Disk Systems Between Peer Clusters

The Business Continuity Clustering software does not perform data mirroring. You must separately configure either storage-based mirroring or host-based file system mirroring for the shared disks that you want to fail over between peer clusters. Storage-based synchronized mirroring is the preferred solution.

IMPORTANT: Use whatever method is available to implement storage-based mirroring or host-based file system mirroring between the peer clusters for each of the shared disks that you plan to fail over between peer clusters.

For information about how to configure host-based file system mirroring for Novell Storage Services pool resources, see Section C.0, Configuring Host-Based File System Mirroring for NSS Pools.

For information about storage-based mirroring, consult the vendor for your storage system or see the vendor documentation.

4.1.13 LUN Masking for Shared Devices

LUN masking is the ability to exclusively assign each LUN to one or more host connections. With it, you can assign appropriately sized pieces of storage from a common storage pool to various servers. See your storage system vendor documentation for more information on configuring LUN masking.

When you create a Novell Cluster Services system that uses a shared storage system, it is important to remember that all of the servers that you grant access to the shared device, whether in the cluster or not, have access to all of the volumes on the shared storage space unless you specifically prevent such access. Novell Cluster Services arbitrates access to shared volumes for all cluster nodes, but cannot protect shared volumes from being corrupted by non-cluster servers.

Software included with your storage system can be used to mask LUNs or to provide zoning configuration of the SAN fabric to prevent shared volumes from being corrupted by non-cluster servers.

IMPORTANT: We recommend that you implement LUN masking in your business continuity cluster for data protection. LUN masking is provided by your storage system vendor.
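To spot servers that LUN masking should exclude, you can compare the hosts that can see each LUN against the cluster membership. This sketch assumes that you have already exported a LUN-to-host mapping from your storage vendor's management software; the `lun_access` dictionary, the `unauthorized_hosts` helper, and the host names are hypothetical, and the code does not communicate with any storage system.

```python
def unauthorized_hosts(lun_access, cluster_nodes):
    """Return {lun: [hosts]} for hosts that can see a LUN but are not cluster nodes."""
    nodes = set(cluster_nodes)
    result = {}
    for lun, hosts in lun_access.items():
        outsiders = sorted(set(hosts) - nodes)
        if outsiders:  # report only LUNs exposed to non-cluster servers
            result[lun] = outsiders
    return result


if __name__ == "__main__":
    # Hypothetical mapping exported from the storage system's masking configuration.
    lun_access = {
        "lun0": ["c1server35", "c1server36", "backup01"],
        "lun1": ["c1server35", "c1server36"],
    }
    print(unauthorized_hosts(lun_access, ["c1server35", "c1server36", "c1server37"]))
```

Here the sketch prints `{'lun0': ['backup01']}`, flagging a non-cluster server that can reach a shared LUN.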

4.1.14 Link Speeds

For real-time mirroring, link latency is the essential consideration. For best performance, the link speeds should be at least 1 GB per second, and the links should be dedicated.

For distances greater than 200 kilometers, you should consider many factors, including:

- The amount of data being transferred

- The bandwidth of the link

- Whether snapshot technology is being used for data replication
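Because synchronous (real-time) mirroring typically waits for the remote site to acknowledge each write, every mirrored write incurs at least one network round trip. The rough estimate below assumes that signals travel through optical fiber at about 200 km per millisecond and ignores switching, protocol, and storage latency; the function names are illustrative.

```python
# Approximate signal speed in optical fiber (about two-thirds of c); an assumption.
FIBER_KM_PER_MS = 200.0


def round_trip_ms(distance_km: float) -> float:
    """Propagation-only round-trip delay between two sites, in milliseconds."""
    return 2 * distance_km / FIBER_KM_PER_MS


def transfer_seconds(data_bytes: float, link_bits_per_s: float) -> float:
    """Time to send data_bytes at full line rate, ignoring protocol overhead."""
    return data_bytes * 8 / link_bits_per_s


if __name__ == "__main__":
    print(round_trip_ms(200))              # 200 km apart
    print(transfer_seconds(10e9, 1e9))     # 10 GB over a 1 Gbit/s link
```

For example, `round_trip_ms(200)` returns `2.0`, and `transfer_seconds(10e9, 1e9)` returns `80.0`, which shows how both distance and data volume grow quickly as factors beyond 200 kilometers.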

4.1.15 Ports

If you are using a firewall, the ports must be opened for OpenWBEM and the Identity Manager drivers.

Table 4-1 Default Ports for the BCC Setup

| Product | Default Port |
|---|---|
| OpenWBEM | 5989 (secure) |
| eDirectory driver | 8196 |
| Cluster Resources Synchronization driver | 2002 (plus the ports for additional instances) |
| User Object Synchronization driver | 2001 (plus the ports for additional instances) |
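Before installing BCC, you can verify that the ports in Table 4-1 are reachable through your firewall. The following sketch uses Python's standard `socket.create_connection`. The target host name is an assumption you supply, the check covers TCP reachability only, and a successful connection does not confirm which service is listening; ports for additional driver instances are not included.

```python
import socket
import sys

# Default ports from Table 4-1.
DEFAULT_PORTS = {
    "OpenWBEM (HTTPS)": 5989,
    "eDirectory driver": 8196,
    "Cluster Resources Synchronization driver": 2002,
    "User Object Synchronization driver": 2001,
}


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, timed out, unreachable, or name lookup failed
        return False


def report(host: str, ports=DEFAULT_PORTS) -> dict:
    """Check every port in ports against host."""
    return {name: port_open(host, port) for name, port in ports.items()}


if __name__ == "__main__":
    target = sys.argv[1] if len(sys.argv) > 1 else "localhost"
    for name, ok in report(target).items():
        print(f"{name:45} {'open' if ok else 'blocked or closed'}")
```

Run it from a node in one peer cluster against the Identity Manager node in another, for example `python3 check_ports.py c2server135` (the script file name is hypothetical).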

4.1.16 Web Browser

When using iManager, make sure your Web browser settings meet the requirements in this section.

Web Browser Language Setting

The iManager plug-in might not operate properly if the highest-priority Language setting for your Web browser is set to a language other than one of iManager's supported languages. To avoid problems, open the language preferences in your Web browser, then set the first language preference in the list to a supported language.

Refer to the Novell iManager documentation for information about supported languages.

Web Browser Character Encoding Setting

Supported language codes are Unicode (UTF-8) compliant. To avoid display problems, make sure the Character Encoding setting for the browser is set to Unicode (UTF-8) or ISO 8859-1 (Western, Western European, West European).

In a Mozilla browser, open the character encoding menu, then select a supported character encoding setting.

In an Internet Explorer browser, open the encoding menu, then select a supported character encoding setting.