13.4 Configuring an LVM Cluster Resource with LVM Commands and the Generic File System Template

This section describes how to use Linux Logical Volume Manager (LVM) commands to create a shared LVM volume group. You then use the Generic File System template (Generic_FS_Template) to create a volume group cluster resource that cluster-enables the existing LVM volume group.

After you create the resource, you can add lines to its load script, unload script, and monitor script to customize the resource for other uses. Compare the Generic_FS_Template to the resource template for your product to determine which lines need to be added or modified.

13.4.1 Creating a Shared LVM Volume with LVM Commands

You can create a shared LVM volume group and logical volume by using native Linux LVM commands.

Sample Values for the LVM Volume Group and Logical Volume

The procedures in this section use the following sample parameters. Ensure that you replace the sample values with your own values.

| Parameter | Sample Value |
|---|---|
| LVM physical volume | /dev/sdd<br>For clustering, we recommend only one device in the LVM volume group. |
| LVM volume group name | clustervg01 |
| LVM logical volume | clustervol01 |
| File system type | ext3<br>This is the file system type that you make on the LVM logical volume, such as btrfs, ext2, ext3, reiserfs, or xfs. For information about mkfs command options for each file system type, see the mkfs(8) man page. |
| Logical volume path | /dev/clustervg01/clustervol01 |
| Mount point for the logical volume | /mnt/clustervol01 |

Creating a Shared LVM Volume Group (Quick Reference)

You can create the volume group and logical volume by issuing the following LVM commands as the root user on the cluster node.

Before you begin, initialize the SAN device as described in Section 13.2, Initializing a SAN Device.

When you are done, continue with Section 13.4.2, Creating a Generic File System Cluster Resource for an LVM Volume Group.

| Action | Command |
|---|---|
| 1. Create the LVM physical volume. | pvcreate <device> |
| 2. Create the clustered LVM volume group. | vgcreate -c y <vg_name> <device> |
| 3. Activate the volume group exclusively on the node. | vgchange -a ey <vg_name> |
| 4. Create the LVM logical volume. | lvcreate -n <lv_name> -L <size> <vg_name> |
| 5. Add a file system to the LVM logical volume. | mkfs -t <fs_type> /dev/<vg_name>/<lv_name> [fs_options] |
| 6. If it does not exist, create the mount point path. | mkdir -p <full_mount_point_path> |
| 7. Mount the LVM logical volume. | mount -t <fs_type> /dev/<vg_name>/<lv_name> <mount_point> |
| 8. Dismount the LVM logical volume and deactivate the volume group. After you create the Generic LVM cluster resource, use the cluster resource to control when the volume is online or offline. | umount /dev/<vg_name>/<lv_name><br>vgchange -a n <vg_name> |
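The eight quick-reference steps can also be collected into one script. The following is a minimal sketch that assumes the sample values from this section. The run helper, the create_shared_lvm_volume function, and the DRY_RUN variable are illustrative names, not part of LVM or Novell Cluster Services. By default the script only prints the commands so that you can review them; nothing is changed on the system.

```shell
#!/bin/bash
# Sketch only: prints the quick-reference commands for the sample values.
# DRY_RUN (hypothetical variable) defaults to 1, so nothing is executed.
# Set DRY_RUN=0 and run as root on a cluster node to execute the commands.
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode; otherwise run it and stop on failure.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@" || exit 1
    fi
}

# Arguments: device, volume group, logical volume, size in MB,
# file system type, mount point.
create_shared_lvm_volume() {
    device="$1"; vg="$2"; lv="$3"; size_mb="$4"; fs_type="$5"; mount_point="$6"
    lv_path="/dev/${vg}/${lv}"

    run pvcreate "$device"                            # step 1
    run vgcreate -c y "$vg" "$device"                 # step 2
    run vgchange -a ey "$vg"                          # step 3
    run lvcreate -n "$lv" -L "$size_mb" "$vg"         # step 4
    run mkfs -t "$fs_type" "$lv_path"                 # step 5
    run mkdir -p "$mount_point"                       # step 6
    run mount -t "$fs_type" "$lv_path" "$mount_point" # step 7
    run umount "$lv_path"                             # step 8
    run vgchange -a n "$vg"                           # step 8
}

create_shared_lvm_volume /dev/sdd clustervg01 clustervol01 500 ext3 /mnt/clustervol01
```

The script stops at the first failing command when DRY_RUN=0, because each later step depends on the one before it.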

Creating a Shared LVM Volume Group (Detailed Instructions)

For detailed instructions, use the following procedure to create the LVM volume group and logical volume:

-

Log in as the Linux root user to the first node of the cluster, then open a terminal console.

-

Initialize the SAN device as described in Section 13.2, Initializing a SAN Device.

For clustering, we recommend only one device in the LVM volume group.

-

Create an LVM physical volume on the device (such as /dev/sdd) by entering:

    pvcreate <device>

For example:

    pvcreate /dev/sdd
      No physical volume label read from /dev/sdd
      Physical volume "/dev/sdd" successfully created

-

Display information about the physical volume by entering:

    pvdisplay [device]

For example, to view information about /dev/sdd:

    pvdisplay /dev/sdd
      "/dev/sdd" is a new physical volume of "512 MB"
      --- NEW Physical volume ---
      PV Name               /dev/sdd
      VG Name
      PV Size               512 MB
      Allocatable           NO
      PE Size (KByte)       0
      Total PE              0
      Free PE               0
      Allocated PE          0
      PV UUID               dg5WG9-IJvq-MkEM-iRrC-hMKv-Zmwq-YY0N3m

-

Create an LVM volume group (such as clustervg01) on the physical volume by entering:

    vgcreate -c y <vg_name> <device>

For example:

    vgcreate -c y "clustervg01" /dev/sdd
      Clustered volume group "clustervg01" successfully created

The volume group is automatically activated.

-

Activate the volume group exclusively on the current server by entering:

    vgchange -a ey <vg_name>

The -a option sets the activation state of the logical volumes in the volume group. The ey value combines e (exclusive) and y (yes), so the volume group is activated exclusively on this node.

For example:

    vgchange -a ey clustervg01
      logical volume(s) in volume group "clustervg01" now active

-

View information about the volume group by using the vgdisplay command.

    vgdisplay <vg_name>

Notice that 4 MB of the device is used for the volume group’s Physical Extent (PE) table. You must consider this reduction in available space on the volume group when you specify the size of the LVM logical volume in the next step (Step 8).

For example:

    vgdisplay clustervg01
      --- Volume group ---
      VG Name               clustervg01
      System ID
      Format                lvm2
      Metadata Areas        1
      Metadata Sequence No  1
      VG Access             read/write
      VG Status             resizable
      MAX LV                0
      Cur LV                0
      Open LV               0
      Max PV                0
      Cur PV                1
      Act PV                1
      VG Size               508.00 MB
      PE Size               4.00 MB
      Total PE              127
      Alloc PE / Size       0 / 0
      Free PE / Size        127 / 508.00 MB
      VG UUID               rqyAd3-U2dg-HYLw-0SyN-loO7-jBH3-qHvySe

-

Create an LVM logical volume (such as clustervol01) on the volume group by entering:

    lvcreate -n <lv_name> -L <size> <vg_name>

Specify the logical volume name, size, and the name of the volume group where you want to create it. The size is specified in megabytes by default.

The logical volume full path name is /dev/<vg_name>/<lv_name>.

For example:

    lvcreate -n "clustervol01" -L 500 "clustervg01"
      Logical volume "clustervol01" created

This volume’s full path name is /dev/clustervg01/clustervol01.

-

View information about the logical volume by entering:

    lvdisplay -v /dev/<vg_name>/<lv_name>

For example:

    lvdisplay -v /dev/clustervg01/clustervol01
        Using logical volume(s) on command line
      --- Logical volume ---
      LV Name                /dev/clustervg01/clustervol01
      VG Name                clustervg01
      LV UUID                nIfsMp-alRR-i4Lw-Wwdt-v5io-2hDN-qrWTLH
      LV Write Access        read/write
      LV Status              available
      # open                 0
      LV Size                500.00 MB
      Current LE             125
      Segments               1
      Allocation             inherit
      Read ahead sectors     auto
      - currently set to     1024
      Block device           253:1

-

Create a file system (such as Btrfs, Ext2, Ext3, ReiserFS, or XFS) on the LVM logical volume by entering:

    mkfs -t <fs_type> /dev/<vg_name>/<lv_name> [fs_options]

For example:

    mkfs -t ext3 /dev/clustervg01/clustervol01
    mke2fs 1.41.9 (22-Aug-2009)
    Filesystem label=
    OS type: Linux
    Block size=1024 (log=0)
    Fragment size=1024 (log=0)
    128016 inodes, 512000 blocks
    25600 blocks (5.00%) reserved for the super user
    First data block=1
    Maximum filesystem blocks=67633152
    63 block groups
    8192 blocks per group, 8192 fragments per group
    2032 inodes per group
    Superblock backups stored on blocks:
            8193, 24577, 40961, 57345, 73729, 204801, 221185, 401409
    Writing inode tables: done
    Creating journal (8192 blocks): done
    Writing superblocks and filesystem accounting information: done
    This filesystem will be automatically checked every 29 mounts or
    180 days, whichever comes first. Use tune2fs -c or -i to override.

-

If the mount point does not exist, create the full directory path for the mount point.

    mkdir -p <full_mount_point_path>

For example:

    mkdir -p /mnt/clustervol01

-

Mount the logical volume on the desired mount point by entering:

    mount -t <fs_type> /dev/<vg_name>/<lv_name> <mount_point>

For example:

    mount -t ext3 /dev/clustervg01/clustervol01 /mnt/clustervol01

-

Dismount the volume and deactivate the LVM volume group by entering:

    umount /dev/<vg_name>/<lv_name>
    vgchange -a n <vg_name>

This allows you to use the load script and unload script to control when the volume group is activated or deactivated in the cluster.

For example, to unmount the volume and deactivate the clustervg01 volume group, enter:

    umount /dev/clustervg01/clustervol01
    vgchange -a n "clustervg01"
      0 logical volume(s) in volume group "clustervg01" now active

-

Continue with Section 13.4.2, Creating a Generic File System Cluster Resource for an LVM Volume Group.
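The size arithmetic behind the vgdisplay and lvdisplay output in this procedure can be checked with a few lines of shell. This is a minimal sketch; the function names vg_capacity_mb and extents_needed are illustrative and are not LVM commands. It assumes sizes in whole megabytes and that a logical volume size is rounded up to a whole number of extents.

```shell
#!/bin/bash
# Usable space in a volume group: number of physical extents times extent size.
vg_capacity_mb() {
    total_pe="$1"; pe_size_mb="$2"
    echo $(( total_pe * pe_size_mb ))
}

# Logical extents needed for a requested size, rounded up to a whole extent.
extents_needed() {
    size_mb="$1"; pe_size_mb="$2"
    echo $(( (size_mb + pe_size_mb - 1) / pe_size_mb ))
}

vg_capacity_mb 127 4    # prints 508, the VG Size in the vgdisplay sample
extents_needed 500 4    # prints 125, the Current LE in the lvdisplay sample
```

With the sample values, a 500 MB logical volume uses 125 of the 127 available extents, which leaves 8 MB free in the volume group.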

13.4.2 Creating a Generic File System Cluster Resource for an LVM Volume Group

You can use the Novell Cluster Services Generic File System template (Generic_FS_Template) to create a cluster resource for the LVM volume group.

-

Ensure that the SAN device is attached to all of the nodes in the cluster.

-

Ensure that the existing LVM volume group that you want to cluster-enable is deactivated on all nodes in the cluster.

See Step 13 in Section 13.4.1, Creating a Shared LVM Volume with LVM Commands.

-

In iManager, select Clusters > My Clusters.

-

Select the cluster you want to manage, then click Cluster Options.

-

Click the New link.

-

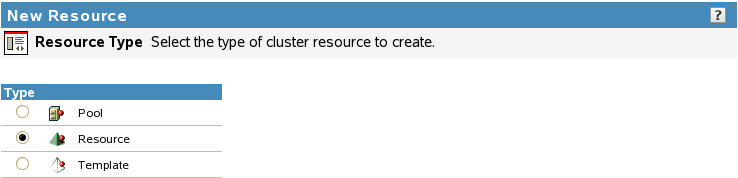

Click the Resource radio button, then click Next.

-

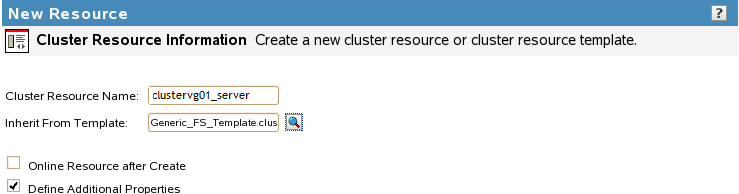

Specify information to define your new cluster resource:

-

Specify the name of the resource you want to create, such as clustervg01.

Do not use periods in cluster resource names. Novell clients interpret periods as delimiters. If you use a space in a cluster resource name, that space is converted to an underscore.

-

In the Inherit From Template field, browse to select the Generic_FS_Template.

-

Deselect or select Online Resource after Create.

This option is deselected by default. Deselect this option to allow an opportunity to verify the scripts and resource policies before you bring the resource online for the first time.

Select this option to automatically bring the resource online on its most preferred node as soon as it is created and configured. The most preferred node is the first server in the assigned nodes list for the resource. You should also select the Define Additional Properties check box to configure the resource now.

-

Select the Define Additional Properties check box.

When this option is enabled, a wizard walks you through the load, unload, and monitor scripts, then allows you to set up the policies and preferred nodes for the cluster resource.

-

Click Next.

If you selected Define Additional Properties, continue with the setup. Otherwise, select the resource in the Cluster Objects list to open its properties page, and configure the resource.

-

-

Configure the load script for the resource by replacing the variables with your own values, specify the Load Script Timeout value, then click Next.

The following is the default Generic_FS template load script. The variable values must be specified before you can successfully bring the resource online. Be mindful that Linux path names are case sensitive. The mount path must already exist. You can add a mkdir command in the script for the mount point, or create the directory manually on each node before you allow the resource to fail over to other nodes. A sample script is available in Sample Generic LVM Resource Load Script.

    #!/bin/bash
    . /opt/novell/ncs/lib/ncsfuncs

    # define the IP address
    RESOURCE_IP=a.b.c.d
    # define the file system type
    MOUNT_FS=ext3
    #define the volume group name
    VOLGROUP_NAME=name
    # define the device
    MOUNT_DEV=/dev/$VOLGROUP_NAME/volume_name
    # define the mount point
    MOUNT_POINT=/mnt/mount_point

    #activate the volume group
    exit_on_error vgchange -a ey $VOLGROUP_NAME

    # create the mount point on the node if it does not exist
    ignore_error mkdir -p $MOUNT_POINT

    # mount the file system
    exit_on_error mount_fs $MOUNT_DEV $MOUNT_POINT $MOUNT_FS

    # add the IP address
    exit_on_error add_secondary_ipaddress $RESOURCE_IP

    exit 0

-

Configure the unload script for the resource by replacing the variables with your own values, specify the Unload Script Timeout value, then click Next.

The following is the default Generic_FS template unload script. The variable values must be specified before you bring the resource online. Be mindful that Linux path names are case sensitive. A sample script is available in Sample Generic LVM Resource Unload Script.

    #!/bin/bash
    . /opt/novell/ncs/lib/ncsfuncs

    # define the IP address
    RESOURCE_IP=a.b.c.d
    # define the file system type
    MOUNT_FS=ext3
    #define the volume group name
    VOLGROUP_NAME=name
    # define the device
    MOUNT_DEV=/dev/$VOLGROUP_NAME/volume_name
    # define the mount point
    MOUNT_POINT=/mnt/mount_point

    # del the IP address
    ignore_error del_secondary_ipaddress $RESOURCE_IP

    # unmount the volume
    sleep 10 # if not using SMS for backup, please comment out this line
    exit_on_error umount_fs $MOUNT_DEV $MOUNT_POINT $MOUNT_FS

    #deactivate the volume group
    exit_on_error vgchange -a n $VOLGROUP_NAME

    # return status
    exit 0

-

Configure the monitor script for the resource by replacing the variables with your own values, specify the Monitor Script Timeout value, then click Next.

The following is the default Generic_FS template monitor script. Be mindful that Linux path names are case sensitive. The variable values must be specified before you bring the resource online. A sample script is available in Sample Generic LVM Resource Monitor Script.

    #!/bin/bash
    . /opt/novell/ncs/lib/ncsfuncs

    # define the IP address
    RESOURCE_IP=a.b.c.d
    # define the file system type
    MOUNT_FS=ext3
    #define the volume group name
    VOLGROUP_NAME=name
    # define the device
    MOUNT_DEV=/dev/$VOLGROUP_NAME/volume_name
    # define the mount point
    MOUNT_POINT=/mnt/mount_point

    #check the logical volume
    exit_on_error status_lv $MOUNT_DEV

    # test the file system
    exit_on_error status_fs $MOUNT_DEV $MOUNT_POINT $MOUNT_FS

    # status the IP address
    exit_on_error status_secondary_ipaddress $RESOURCE_IP

    exit 0

-

On the Resource Policies page, view and modify the resource’s Policy settings:

-

(Optional) Select the Resource Follows Master check box if you want to ensure that the resource runs only on the master node in the cluster.

If the master node in the cluster fails, the resource fails over to the node that becomes the master.

-

(Optional) Select the Ignore Quorum check box if you don’t want the cluster-wide timeout period and node number limit enforced.

The quorum default values were set when you installed Novell Cluster Services. You can change the quorum default values by accessing the properties page for the Cluster object.

Selecting this box ensures that the resource is launched immediately on any server in the Preferred Nodes list as soon as any server in the list is brought online.

-

(Optional) By default, the Generic File System resource template sets the Start mode and Failover mode to Auto and the Failback Mode to Disable. You can change the default settings as needed.

-

Start Mode: If the Start mode is set to Auto, the resource automatically loads on a designated server when the cluster is first brought up. If the Start mode is set to Manual, you can manually start the resource on a specific server when you want, instead of having it automatically start when servers in the cluster are brought up.

-

Failover Mode: If the Failover mode is set to Auto, the resource automatically moves to the next server in the Preferred Nodes list if there is a hardware or software failure. If the Failover mode is set to Manual, you can intervene after a failure occurs and before the resource is started on another node.

-

Failback Mode: If the Failback mode is set to Disable, the resource continues running on the node that it failed over to. If the Failback mode is set to Auto, the resource automatically moves back to its preferred node when the preferred node is brought back online. Set the Failback mode to Manual to prevent the resource from moving back to its preferred node when that node is brought back online, until you are ready to allow it to happen.

-

-

Click Next.

-

-

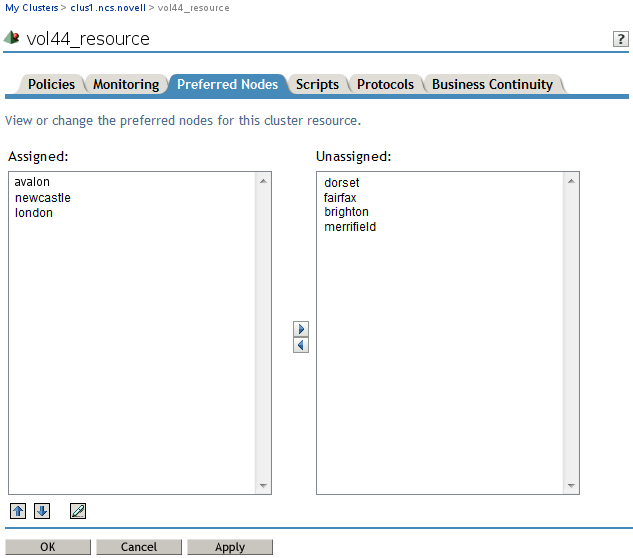

On the Preferred Nodes page, assign preferred nodes for the resource by moving them from the Unassigned list to the Assigned list.

When you bring a resource online, it is automatically loaded on the most preferred node in the list. If the node is not available, the other nodes are tried in the order that they appear in the list. You can modify the order of the nodes by clicking the Edit (pen) icon to open the list in a text editor. When you finish editing, click OK to close the editor.

-

Click Finish.

Typically, resource creation takes less than 10 seconds. However, if you have a large tree or if the server does not hold an eDirectory replica, it can take up to 3 minutes.

-

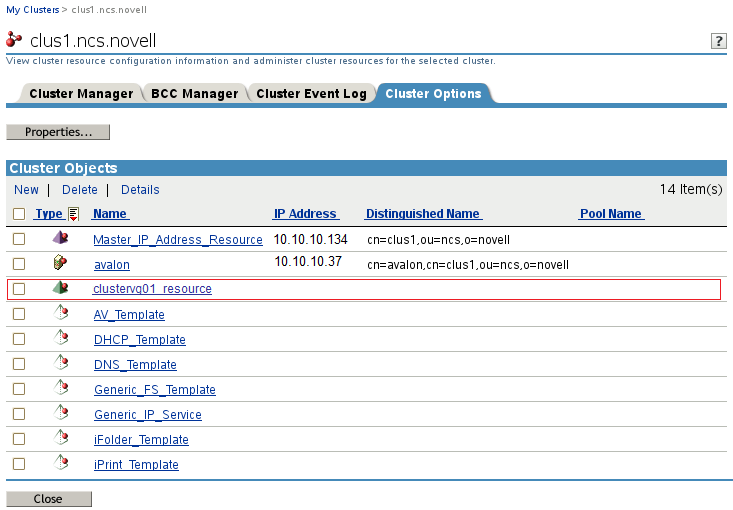

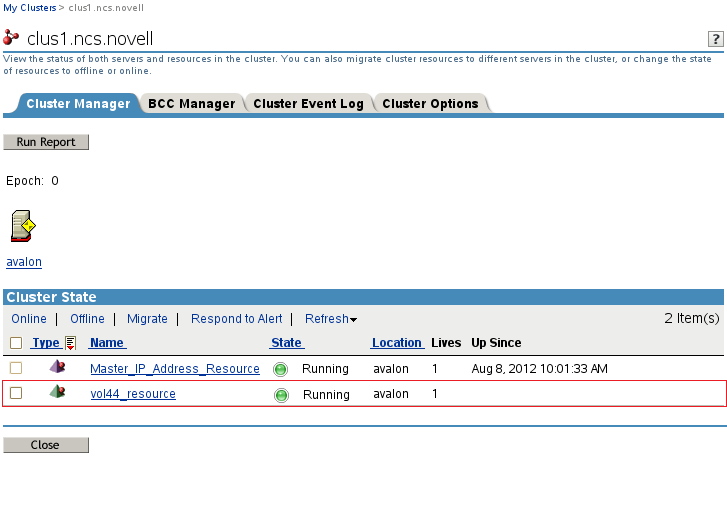

Verify that the resource was created by viewing its entry in the Cluster Objects list on the Cluster Options page.

-

(Optional) Enable and configure resource monitoring:

Resource monitoring is disabled by default. Enable the monitoring function and modify the settings for the resource. For detailed information, see Section 10.7, Enabling Monitoring and Configuring the Monitor Script.

-

In iManager on the Cluster Manager page or Cluster Options page, select the resource name link to open its Properties dialog box, then select the Monitoring tab.

-

Select the Enable Resource Monitoring check box to enable resource monitoring for the selected resource.

-

Specify the Polling Interval to control how often you want the resource monitor script for this resource to run.

You can specify the value in minutes or seconds. See Polling Interval.

-

Specify the number of failures (Maximum Local Failures) for the specified amount of time (Time Interval).

See Failure Rate.

-

Specify the Failover Action by indicating whether you want the resource to be set to a comatose state, to migrate to another server, or to reboot the hosting node (without synchronizing or unmounting the disks) if a failure action initiates. The reboot option is normally used only for a mission-critical cluster resource that must remain available.

See Failure Action.

-

-

Bring the resource online:

-

Select Clusters > My Clusters.

-

On the Cluster Manager page, select the check box next to the new cluster resource, then click Online.

Ensure that the resource successfully enters the Running state. If the resource goes comatose instead, it is probably because of an error in the values that you typed in the script definitions. Typical issues are that the target LVM volume is still active locally on a node, the path names were not entered with the correct case, the IP address is not unique in the network, or the mount path does not exist.

Take the resource offline, go to the resource’s Properties > Scripts page to review and modify its scripts as needed to correct errors, then try again to bring the resource online.

-
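Two of the typical causes of a comatose resource listed above can be checked from a terminal on each node before you bring the resource online: whether the volume group still has an active logical volume on the node, and whether the mount path exists. The following is a minimal sketch, not a Novell Cluster Services tool. The function names any_lv_active and preflight are illustrative. It assumes that the LVM2 lvs command is available and that the fifth character of its lv_attr field is a for an active logical volume.

```shell
#!/bin/bash
# Reads lv_attr strings (one per line) from standard input.
# Returns 0 if any of them marks an active logical volume
# (fifth attribute character is "a"), otherwise returns 1.
any_lv_active() {
    while read -r attr; do
        case "$attr" in
            ????a*) return 0 ;;
        esac
    done
    return 1
}

# Arguments: volume group name, mount point.
# Prints a message for each problem found and returns 1 if there is any.
preflight() {
    vg="$1"; mount_point="$2"
    status=0
    if lvs --noheadings -o lv_attr "$vg" 2>/dev/null | any_lv_active; then
        echo "volume group $vg still has an active logical volume on this node"
        status=1
    fi
    if [ ! -d "$mount_point" ]; then
        echo "mount point $mount_point does not exist on this node"
        status=1
    fi
    return $status
}
```

For the sample values, you would run preflight clustervg01 /mnt/clustervol01 as the root user on each node. The check does not cover the other typical causes, such as a duplicate IP address.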

13.4.3 Sample Generic LVM Resource Scripts

This section contains sample scripts for the Generic LVM resource.

The sample scripts in this section use the following sample parameters. Ensure that you replace the sample values with your values.

| Parameter | Sample Value |
|---|---|
| RESOURCE_IP | 10.10.10.136 |
| MOUNT_FS | ext3 |
| VOLGROUP_NAME | clustervg01 |
| MOUNT_DEV | /dev/$VOLGROUP_NAME/clustervol01 |
| MOUNT_POINT | /mnt/clustervol01 |
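The MOUNT_DEV sample value refers to VOLGROUP_NAME, so the shell expands it to the logical volume path that was created in Section 13.4.1. The following short sketch only sets the sample values and prints the expanded device path; it does not call any cluster or LVM command.

```shell
#!/bin/bash
# Sample values from the table above.
RESOURCE_IP=10.10.10.136
MOUNT_FS=ext3
VOLGROUP_NAME=clustervg01
MOUNT_DEV=/dev/$VOLGROUP_NAME/clustervol01
MOUNT_POINT=/mnt/clustervol01

# MOUNT_DEV is expanded when it is assigned, so VOLGROUP_NAME must be set first.
echo "$MOUNT_DEV"    # prints /dev/clustervg01/clustervol01
```

This is why the scripts define VOLGROUP_NAME before MOUNT_DEV.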

Sample Generic LVM Resource Load Script

Use the following sample load script to complete the fields for your LVM volume group cluster resource:

    #!/bin/bash
    . /opt/novell/ncs/lib/ncsfuncs

    # define the IP address
    RESOURCE_IP=10.10.10.136
    # define the file system type
    MOUNT_FS=ext3
    # define the volume group name
    VOLGROUP_NAME=clustervg01
    # define the device
    MOUNT_DEV=/dev/$VOLGROUP_NAME/clustervol01
    # define the mount point
    MOUNT_POINT=/mnt/clustervol01

    # activate the volume group
    exit_on_error vgchange -a ey $VOLGROUP_NAME

    # create the mount point on the node if it does not exist
    ignore_error mkdir -p $MOUNT_POINT

    # mount the file system
    exit_on_error mount_fs $MOUNT_DEV $MOUNT_POINT $MOUNT_FS

    # add the IP address
    exit_on_error add_secondary_ipaddress $RESOURCE_IP

    exit 0

Sample Generic LVM Resource Unload Script

Use the following sample unload script to complete the fields for your LVM volume group cluster resource:

    #!/bin/bash
    . /opt/novell/ncs/lib/ncsfuncs

    # define the IP address
    RESOURCE_IP=10.10.10.136
    # define the file system type
    MOUNT_FS=ext3
    # define the volume group name
    VOLGROUP_NAME=clustervg01
    # define the device
    MOUNT_DEV=/dev/$VOLGROUP_NAME/clustervol01
    # define the mount point
    MOUNT_POINT=/mnt/clustervol01

    # del the IP address
    ignore_error del_secondary_ipaddress $RESOURCE_IP

    # unmount the volume
    sleep 10 # if not using SMS for backup, please comment out this line
    exit_on_error umount_fs $MOUNT_DEV $MOUNT_POINT $MOUNT_FS

    # deactivate the volume group
    exit_on_error vgchange -a n $VOLGROUP_NAME

    exit 0

Sample Generic LVM Resource Monitor Script

Use the following sample monitor script to complete the fields for your LVM volume group cluster resource. To use the script, you must also enable monitoring for the resource. See Section 10.7, Enabling Monitoring and Configuring the Monitor Script.

    #!/bin/bash
    . /opt/novell/ncs/lib/ncsfuncs

    # define the IP address
    RESOURCE_IP=10.10.10.136
    # define the file system type
    MOUNT_FS=ext3
    # define the volume group name
    VOLGROUP_NAME=clustervg01
    # define the device
    MOUNT_DEV=/dev/$VOLGROUP_NAME/clustervol01
    # define the mount point
    MOUNT_POINT=/mnt/clustervol01

    # check the logical volume
    exit_on_error status_lv $MOUNT_DEV

    # test the file system
    exit_on_error status_fs $MOUNT_DEV $MOUNT_POINT $MOUNT_FS

    # status the IP address
    exit_on_error status_secondary_ipaddress $RESOURCE_IP

    exit 0
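Because the template scripts ship with placeholder values (a.b.c.d, name, volume_name, and mount_point), it can help to confirm that none of them remain before you paste a script into the resource. The following is a minimal sketch that assumes you have saved the script text to a local file. The function name find_template_placeholders is illustrative and is not part of Novell Cluster Services.

```shell
#!/bin/bash
# Prints each line (with its line number) of the given script file that still
# holds a Generic_FS_Template placeholder value. Returns 1 if any is found,
# 0 if none is found.
find_template_placeholders() {
    script_file="$1"
    if grep -n -E '^(RESOURCE_IP=a\.b\.c\.d|VOLGROUP_NAME=name|MOUNT_DEV=.*/volume_name|MOUNT_POINT=/mnt/mount_point)[[:space:]]*$' "$script_file"; then
        return 1
    fi
    return 0
}
```

For example, find_template_placeholders load_script.sh prints nothing for the sample load script in this section, and prints the RESOURCE_IP, VOLGROUP_NAME, MOUNT_DEV, and MOUNT_POINT lines for an unmodified template script. A volume group that is actually named name would be reported as a false positive.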